Intel Core i3-9100 4-Core Processor Review

AIXPRT – AI benchmark tool

This is our first time using AIXPRT Community Preview 2, a pre-release build of AIXPRT. It is an AI benchmark tool that makes it easier to evaluate a system’s machine learning inference performance by running common image-classification and object-detection workloads.

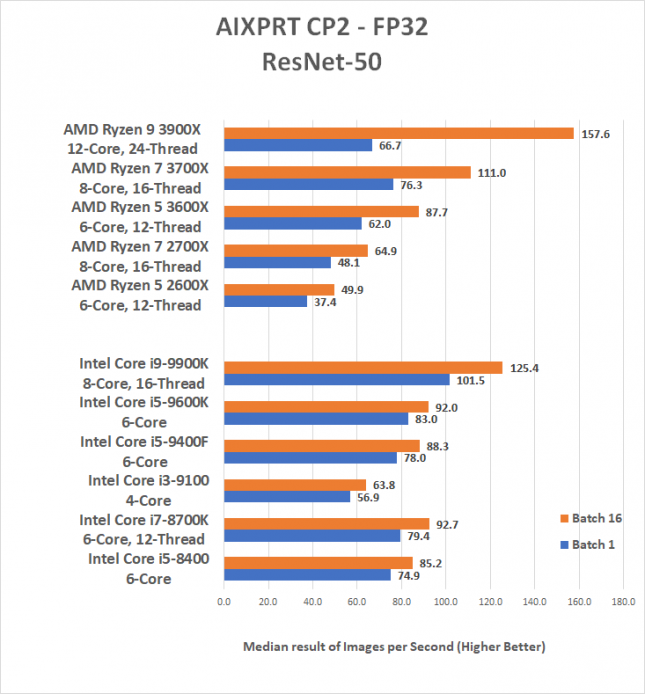

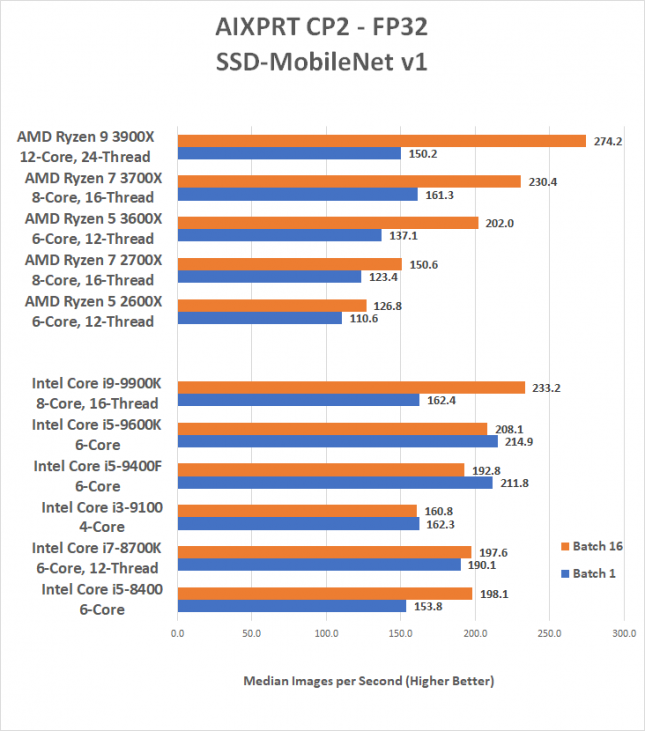

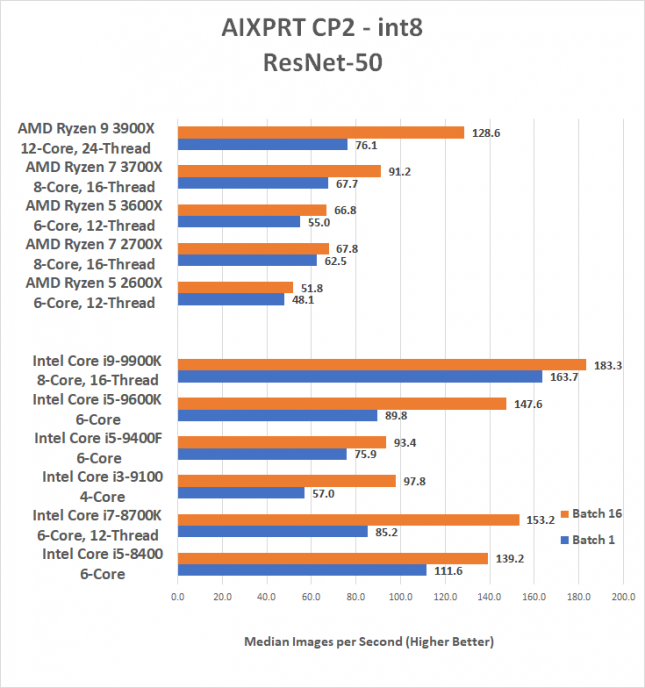

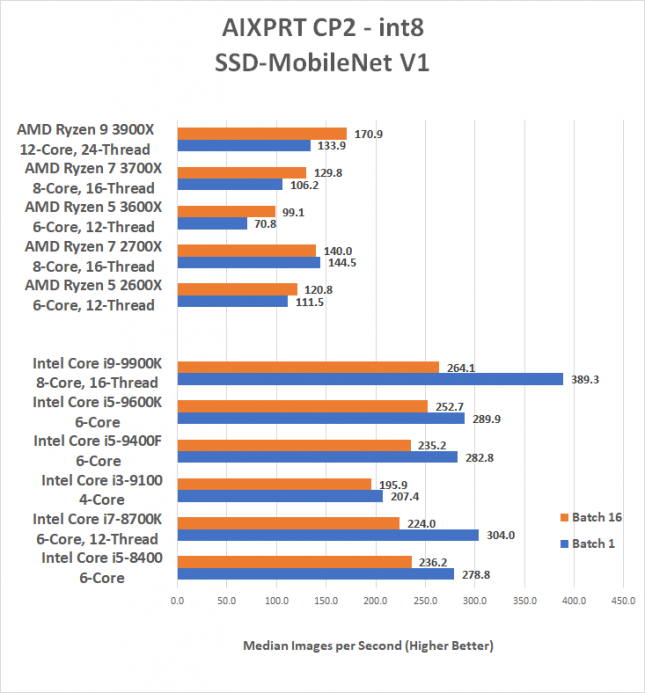

We used the Intel OpenVINO toolkit to run image-classification and object-detection workloads using the ResNet-50 and SSD-MobileNet v1 networks. We tested at FP32 and INT8 levels of precision on our Windows 10 v1903 test systems. The test reports results at batch sizes of 1, 2, 4, 8, 16, and 32, but we’ll be charting just the results for batch 1 and batch 16. For a client-side use case where the lowest inference latency matters most, a batch size of 1 is usually best. Those looking for maximum throughput on servers would more likely run concurrent inferences at larger batch sizes (typically 8, 16, 32, 64, 128). Our readers will therefore likely be most interested in the batch 1 results.
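To make the latency versus throughput trade-off concrete, here is a minimal Python sketch. It assumes throughput is simply the batch size divided by the time taken to process one batch; AIXPRT's own reporting may differ. The function name `throughput_ips` and the timings are hypothetical placeholders for illustration, not measured results from our test systems.

```python
def throughput_ips(batch_size: int, batch_latency_ms: float) -> float:
    """Images per second when one batch of `batch_size` images
    takes `batch_latency_ms` milliseconds to process."""
    if batch_size <= 0 or batch_latency_ms <= 0:
        raise ValueError("batch size and latency must be positive")
    return batch_size * 1000.0 / batch_latency_ms


# Placeholder timings for illustration only (NOT benchmark results):
# batch size -> milliseconds to finish one whole batch.
example_latencies_ms = {1: 10.0, 16: 100.0}

for batch, latency in example_latencies_ms.items():
    rate = throughput_ips(batch, latency)
    print(f"batch {batch}: {latency:.1f} ms per batch, {rate:.1f} images/s")
```

With these placeholder numbers, the larger batch delivers more images per second overall, but each request waits far longer for its result. That is why batch 1 matters most for client-side use.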

FP32 Results:

INT8 Results: