EVGA GeForce RTX 2070 XC Gaming Graphics Card Review

LuxMark & SiSoftware Sandra

LuxMark v3.1 Benchmark

LuxMark is an OpenCL cross-platform benchmark tool that has been around since 2009. It was intended as a promotional tool for LuxCoreRender and remains a popular benchmark for those interested in OpenCL performance. LuxMark is now based on LuxCore, the LuxRender v2.x API, which is available under the Apache License 2.0 and freely usable in open source and commercial applications.

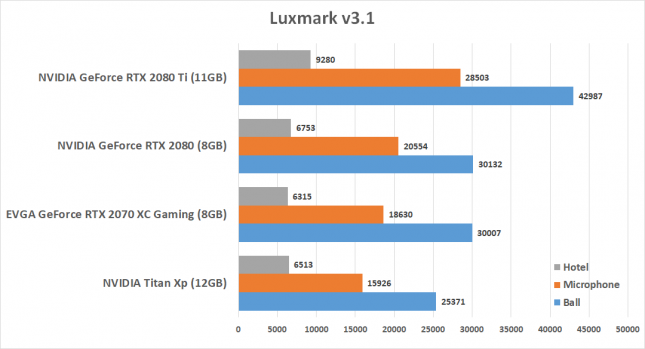

Benchmark Results: The EVGA GeForce RTX 2070 XC and the GeForce RTX 2080 both scored around 30,000 on the ball scene, but on the microphone and hotel scenes we see the RTX 2080 start to pull away. The NVIDIA Titan XP was able to best the RTX 2070 in just the hotel scene, so raw OpenCL performance on Turing is impressive.

SiSoftware Sandra GPGPU Testing

We fired up SiSoftware Sandra Titanium 2018 SP2b (version 28.31) to look at how the new NVIDIA Turing GPU performs in GPGPU workloads. This benchmark isn’t really aimed at gamers, but some will likely find the results interesting.

The first test that we ran was the Scientific Analysis benchmark, and it should be noted that we used CUDA for the test. We set the floating-point precision to ‘normal’ and charted the results for N-Body Simulation (NBDY), Fast Fourier Transform (FFT) and General Matrix Multiply (GEMM) operations.

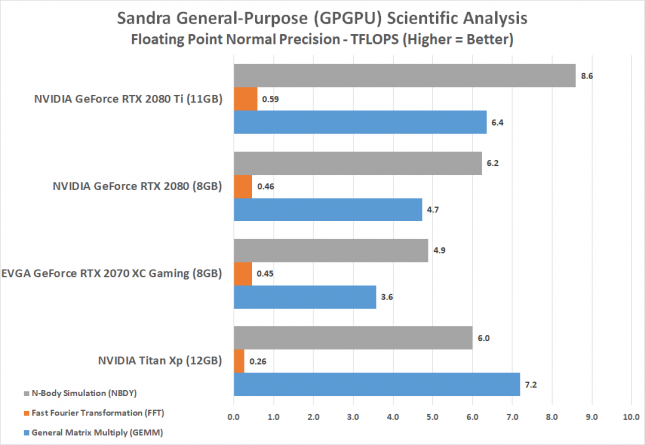

When it comes to scientific number crunching, the RTX 2080 and RTX 2080 Ti leave the GeForce RTX 2070 behind in the N-Body Simulation and General Matrix Multiply tests.
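
For readers unfamiliar with GEMM, here is a minimal Python sketch of the operation and of how its floating-point work is usually counted. This is only an illustration of the math, not Sandra's implementation: the function names `gemm` and `gemm_flops` are made up for this example, and a real GPU benchmark would run a tuned CUDA kernel on far larger matrices.

```python
def gemm(alpha, a, b, beta, c):
    """Return alpha * (a x b) + beta * c for matrices stored as lists of rows."""
    m, k, n = len(a), len(b), len(b[0])
    # Start from the scaled copy of c, then accumulate the product into it.
    out = [[beta * c[i][j] for j in range(n)] for i in range(m)]
    for i in range(m):
        for j in range(n):
            acc = 0.0
            for p in range(k):
                acc += a[i][p] * b[p][j]
            out[i][j] += alpha * acc
    return out


def gemm_flops(m, n, k):
    """Conventional operation count: one multiply and one add per inner step."""
    return 2 * m * n * k


# Tiny 2x2 example (hypothetical values, chosen so the result is easy to check).
a = [[1.0, 2.0], [3.0, 4.0]]
b = [[5.0, 6.0], [7.0, 8.0]]
c = [[0.0, 0.0], [0.0, 0.0]]
result = gemm(1.0, a, b, 0.0, c)
print(result)
print(gemm_flops(2, 2, 2))
```

Because the work grows with the product of all three matrix dimensions, GEMM scales closely with raw shader throughput, which is consistent with the larger RTX 2080 and RTX 2080 Ti pulling ahead in that test.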

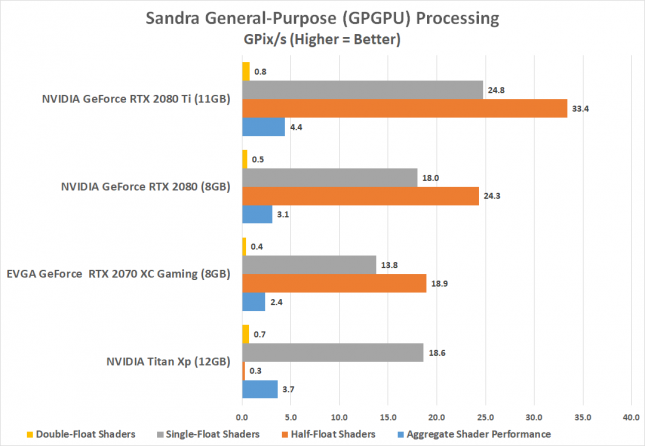

The standard processing test shows just how much more shading performance the RTX 2080 and RTX 2080 Ti have over the new RTX 2070.

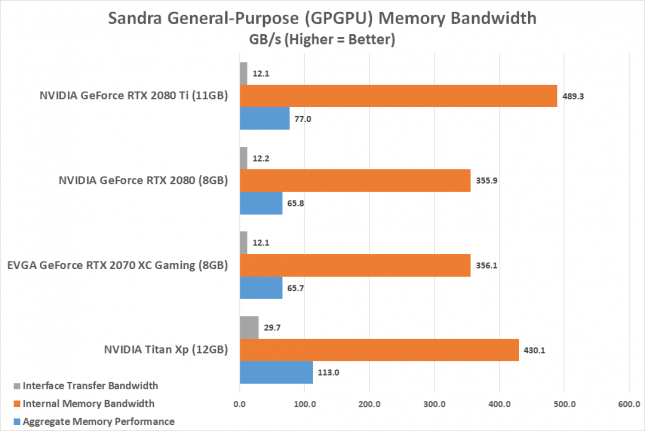

The GPGPU Memory Bandwidth test shows that all three RTX series cards have the same interface transfer bandwidth and that the RTX 2080 and RTX 2070 have the same internal memory bandwidth of right around 360 GB/s.
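
To put the roughly 360 GB/s figure in context, the short Python sketch below compares it with the theoretical peak. It assumes the 256-bit memory bus and 14 Gbps GDDR6 data rate from NVIDIA's published specifications for the RTX 2070 and RTX 2080; those numbers are not taken from the Sandra results, and the helper name `peak_bandwidth_gbs` is made up for this example.

```python
def peak_bandwidth_gbs(bus_width_bits, data_rate_gbps):
    """Theoretical peak memory bandwidth in GB/s.

    bus_width_bits: width of the memory interface in bits
    data_rate_gbps: effective per-pin data rate in Gbit/s
    """
    return bus_width_bits / 8 * data_rate_gbps


# Assumed specs (256-bit bus, 14 Gbps GDDR6), not measured values.
peak = peak_bandwidth_gbs(256, 14.0)

# Approximate internal memory bandwidth reported in the chart above.
measured = 360.0

efficiency = measured / peak
print(f"peak: {peak:.0f} GB/s, measured: {measured:.0f} GB/s, "
      f"efficiency: {efficiency:.0%}")
```

Under those assumptions the measured result works out to about 80% of the theoretical peak, and the identical memory configuration would explain why the two cards land on the same internal bandwidth number.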