AMD Radeon RX 590 Roundup – PowerColor, Sapphire and XFX

Temperature, Noise & Power Consumption

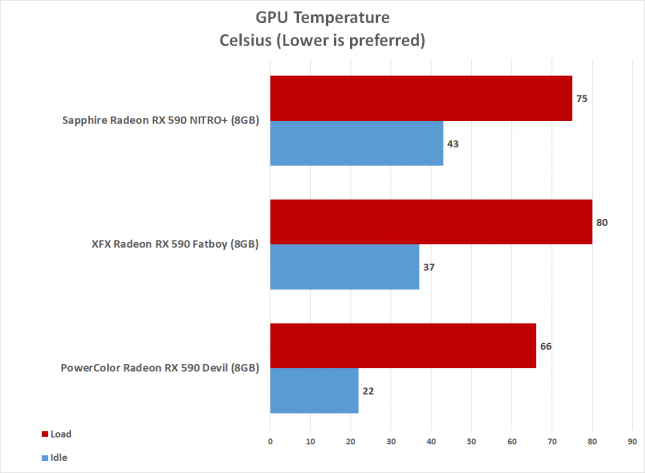

Gaming performance is the most important factor when buying a graphics card, but you also need to consider noise, temperature and power consumption. Years ago, comparing temperatures on graphics cards was fairly simple, but these days manufacturers define temperature targets in the vBIOS. So, you can’t really fault one model for running hotter than another when they are set to run that hot from the factory. The target temperatures for the three cards in this roundup are:

- PowerColor Radeon RX 590 Red Devil – 65C

- Sapphire Nitro+ Radeon RX 590 Special Edition – 75C

- XFX Radeon RX 590 Fatboy – 80C

All three of these cards are advertised as having 0dB fans at idle, but for some reason the fans on the PowerColor card weren’t turning off when the card was under 50C, as advertised. Since its fans were spinning at 1025 RPM at idle, the temperature was just 22C with an ambient room temperature of 21C. The XFX Radeon RX 590 Fatboy had an idle temperature of 37C and the Sapphire Nitro+ came in at 43C. At load, all of the cards topped out within 1C of their target temperature.

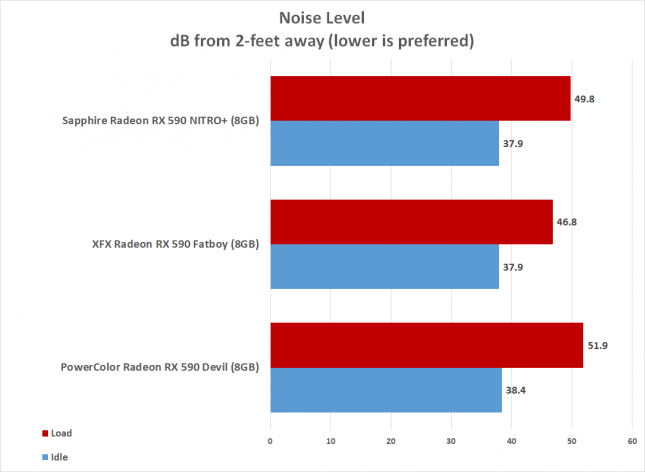

At idle, the cards with working 0dB modes (Sapphire and XFX) were the quietest, and at load we found the XFX Radeon RX 590 Fatboy to be the quietest at 46.8dB. We played PUBG for two hours on each card and the fans maxed out at 1890 RPM on the Sapphire, 1865 RPM on the XFX and 2150 RPM on the PowerColor. We test on an open-air test bench, so this chart shows the noise level without the components being in a case.

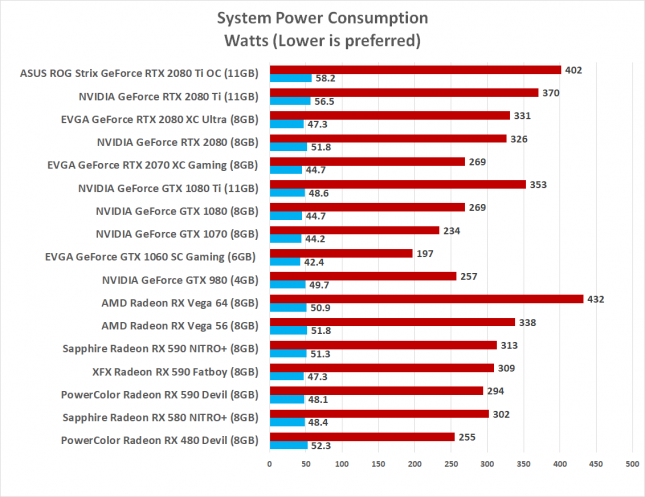

Power Consumption

To test power consumption, we plugged our test system into a Kill-A-Watt power meter. For idle numbers, we let the system idle on the desktop for 15 minutes and then took the reading. For load numbers, we ran Rainbow Six Siege at 1440p and recorded the peak power reading while the in-game benchmark was running. We use the in-game benchmark to ensure the results are repeatable.

Power Consumption Results: The Sapphire Nitro+ Radeon RX 590 8GB Special Edition used the most power. Note that testing was done with all the LED lights on for each card. The XFX Radeon RX 590 Fatboy used just 4 Watts less at load, and the PowerColor Radeon RX 590 Red Devil used 19 Watts less at load. Variance between GPUs likely accounts for part of the difference, since each GPU has a different amount of leakage, but the core/memory clocks also play a major role.