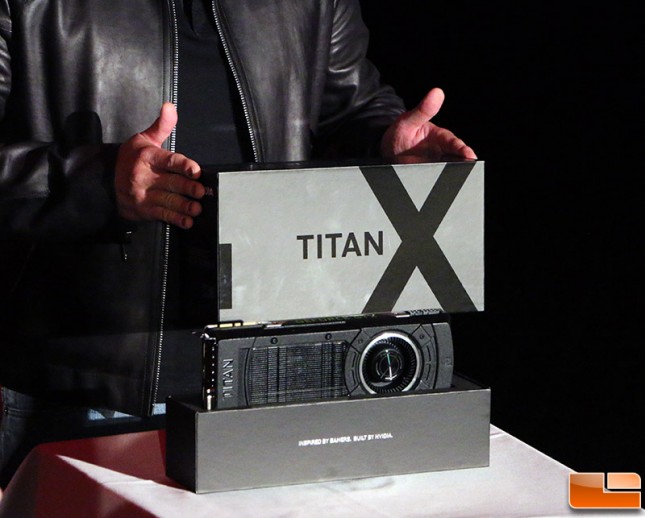

NVIDIA GeForce GTX Titan X 12GB Video Card Overview

Last week Legit Reviews was at the Game Developers Conference (GDC) 2015, where NVIDIA unveiled the GeForce GTX Titan X 12GB graphics card during the Epic Games State of Unreal keynote. Later that day we tracked down Jen-Hsun and were able to bring you some of the very first images of the Titan X! We have received a bunch of questions from readers about this card, so we put together our own FAQ page for the GeForce GTX Titan X to help people keep up with the rumors and what we think NVIDIA is doing with the Titan X!

What GPU Powers The Titan X?

The NVIDIA GeForce GTX Titan X is more than likely powered by a single GM200 Maxwell GPU, affectionately referred to as Big Maxwell by insiders because it is the largest uncut Maxwell core ever taped out and manufactured. The GM200 is rumored to have 3,072 CUDA Cores spread across 24 SMMs, along with 12GB of GDDR5 memory running on a 384-bit bus. The clock speeds on the core and memory are unknown at this point in time, but some sites are reporting that the core clock is 100MHz lower than that of a GeForce GTX 980 (1126MHz base/1216MHz boost). Like other Maxwell GPUs, the GM200 will be made by TSMC on the 28nm manufacturing process. The die is said to be around 600mm² and will be one of the largest GPUs ever used on a GeForce GTX graphics card. The end result will be NVIDIA’s most powerful graphics card to date.

What Games need 12GB of frame buffer?

We can't think of any game title that uses close to 12GB of memory at 4K resolution (3840×2160), but with 12GB of memory you shouldn't have to worry about VRAM running low. Game titles like Assassin's Creed Unity with 8x MSAA and the image quality maxed out will use up 6GB of frame buffer on the current GeForce GTX cards, so there is a need for high-end cards to have more than 4GB of memory in 2015 and beyond.

How much power will the Titan X Use?

Looking at the images of the GeForce GTX Titan X we can see that it will use at most 300 Watts. This is based on the fact that it gets up to 75W of power from the PCIe Gen 3.0 x16 slot on the motherboard, up to 75W from the 6-pin PCIe power connector and up to another 150W from the 8-pin PCIe connector along the top edge of the card. It could use less than this, and for a flagship GPU that has 8 billion transistors we expect it to be in the 225W to 250W TDP range.
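For readers who want to check the math, here is a quick sketch that adds up the three power sources listed above. The per-source limits are the ones quoted in this article, and the names used in the code are just our own labels for illustration. The three limits sum to 300W, which leaves some headroom over the 225W to 250W TDP range we are guessing at.

```python
# Upper power limits for each source feeding the card, in watts.
# Values are the ones quoted in the article; the labels are illustrative.
power_sources_w = {
    "PCIe x16 slot": 75,
    "6-pin PCIe connector": 75,
    "8-pin PCIe connector": 150,
}

# The most the card could draw while staying within these limits.
max_board_power_w = sum(power_sources_w.values())

# Expected TDP range from the article (a guess, not a confirmed spec).
expected_tdp_low_w, expected_tdp_high_w = 225, 250

# Headroom between the delivery limit and the expected TDP range.
headroom_min_w = max_board_power_w - expected_tdp_high_w
headroom_max_w = max_board_power_w - expected_tdp_low_w

print(f"Maximum deliverable power: {max_board_power_w}W")
print(f"Headroom over expected TDP: {headroom_min_w}W to {headroom_max_w}W")
```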

Why does the Titan X have more black on it than the Titan Black of the previous series?

That is a great question, but we really dig the additional black colors as the silver design has been used for several generations. We still think NVIDIA could have pushed the design envelope a little bit though as they have been relying on the same GPU cooling technology and fan shroud for a fair amount of time. At the very least they could have put Titan X on the fan shroud instead of just Titan to help differentiate this model from the other Titan cards that are on the market already.

Why does the Titan X lack a backplate?

The internet is abuzz as to why the NVIDIA GeForce GTX Titan X doesn't have a backplate, and many want to know why such a high-end card would lack something like this. We aren't sure why it doesn't have one, and it is surprising to see that NVIDIA dropped the backplate.

What is the Rumored Launch Date For Titan X?

The leading rumor on the Internet is that the flagship card will be launching March 17th, 2015. This date sounds likely since it falls during the GPU Technology Conference, and is there any better place to roll out such a card? We do not expect this to be a paper launch.

How much will the NVIDIA GeForce GTX Titan X Cost?

Your guess is as good as ours when it comes to how much the NVIDIA Titan X will run, but it appears that this Titan-class card is aimed at just gamers this time around. That is great news as it means the price point should be lower, and we expect it to be priced under $1000 for this very reason. Other sites are reporting that the price will be between $999 and $1349, but for the sake of high-end gamers we hope it will be on the lower side.

Will there be a lower cost version of the GTX Titan X?

Rumors of a GeForce GTX 980 Ti have been floating around for months, so it wouldn't be too big of a surprise to see a cut-down version of the GM200 GPU being used for a GTX 980 Ti. NVIDIA could always disable 2 or 4 SMMs and crank up the clock speeds to produce such a card. The only reason that NVIDIA might not do such a thing is because of what might happen to the frame buffer allocation. NVIDIA just had its feet held to the fire over memory allocation and performance on the GeForce GTX 970, as it turns out the 4GB of memory wasn't working as originally explained by NVIDIA. At the end of the day there will be fallout from the GM200 Maxwell GPU binning, and we think it is highly likely NVIDIA will use those chips for another card that could be price competitive with the AMD Radeon R9 390 series that is due out in June 2015.
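To put rough numbers on what disabling 2 or 4 SMMs would mean, here is a small sketch based on the rumored GM200 figures from earlier in this FAQ (3,072 CUDA Cores across 24 SMMs). It assumes the cores are split evenly across the SMMs, which works out to 128 per SMM. Everything here is speculation built on rumors, and the function name is just our own for illustration.

```python
# Rumored full GM200 configuration (not confirmed by NVIDIA).
RUMORED_CUDA_CORES = 3072
RUMORED_SMMS = 24

# Assumption: cores are divided evenly across the SMMs.
CORES_PER_SMM = RUMORED_CUDA_CORES // RUMORED_SMMS


def cores_with_disabled_smms(disabled_smms):
    """Return the CUDA core count left after disabling some SMMs."""
    if not 0 <= disabled_smms <= RUMORED_SMMS:
        raise ValueError("disabled_smms must be between 0 and the SMM count")
    return (RUMORED_SMMS - disabled_smms) * CORES_PER_SMM


# Compare the full chip with the two cut-down options mentioned above.
for disabled in (0, 2, 4):
    remaining = cores_with_disabled_smms(disabled)
    share = remaining / RUMORED_CUDA_CORES
    print(f"{disabled} SMMs disabled: {remaining} CUDA cores ({share:.1%} of full GM200)")
```

Under these assumptions a hypothetical cut-down card would keep 2,816 cores with 2 SMMs disabled or 2,560 cores with 4 SMMs disabled, which is where higher clock speeds could help close the gap.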

Is The GeForce GTX Titan X Worth The Cost over a GeForce GTX 980?

The GeForce GTX Titan X is the Ferrari of the discrete graphics card world and is not a mass-produced card aimed at mainstream users. There is little competition for this card right now, so the mammoth price-point increase is just as justifiable as it is on any ultra-enthusiast class card. If you want a card that will outperform everything on the market, you are usually willing to pay top dollar to get that.

NVIDIA GeForce GTX Titan X or AMD Radeon R9 390X?

Not enough is known about either card to be able to answer that question, but we are shaping up to have some really great flagship cards on the market this summer.