Intel Pentium G3258 Dual Core Processor Gaming Performance

Tomb Raider

On March 5th, 2013, Square Enix released Tomb Raider, billed as a reboot of the franchise. In Tomb Raider, the player meets a much younger Lara Croft who is shipwrecked and stranded on a mysterious island rife with danger, both natural and human. In contrast to the earlier games, Croft is portrayed as vulnerable, acting out of necessity, desperation and sheer survival rather than for a greater cause or personal gain.

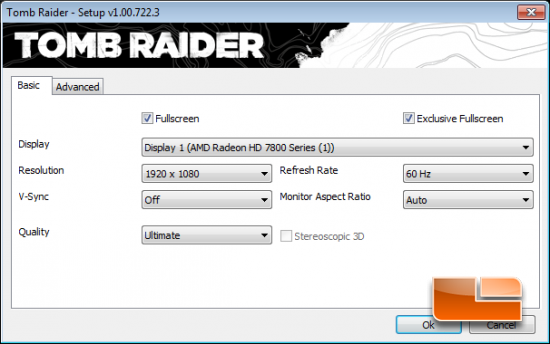

The game is built on Crystal Dynamics’s “Crystal Engine,” and the graphics look fantastic. AMD and Crystal Dynamics worked together on a new technology called TressFX Hair, which AMD describes as the world’s first in-game implementation of a real-time, per-strand hair physics system, developed for this title. We set the image quality to Ultimate for benchmarking, but we disabled TressFX Hair under the Advanced tab to be fair to NVIDIA graphics cards that don’t support the feature.

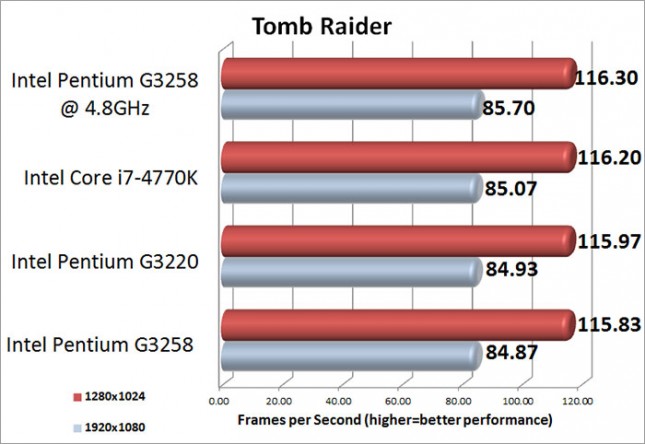

Benchmark Results: In Tomb Raider, there is very little performance difference between the various processors. The Intel Pentium G3258 took the lead when overclocked, averaging 85.7 frames per second at 1920×1080 and 116.30 frames per second at 1280×1024. The gap between the G3258 at stock clocks and overclocked is only 0.83 FPS at 1920×1080 and 0.47 FPS at 1280×1024.
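To put that overclocking gap in perspective, here is a small Python sketch that derives the implied stock-clock averages and the percentage gain from the figures quoted above. It assumes the overclocked run is the faster of the two (stock = overclocked minus the gap), which the results imply but do not state outright; the function and variable names are our own, for illustration only.

```python
# Average FPS reported for the overclocked Pentium G3258 in Tomb Raider
# (Ultimate preset) and the reported gap to the same chip at stock clocks.
# Assumption: the overclocked run is faster, so stock = overclocked - gap.
RESULTS = {
    "1920x1080": {"overclocked_fps": 85.7, "gap_fps": 0.83},
    "1280x1024": {"overclocked_fps": 116.30, "gap_fps": 0.47},
}


def overclock_gain(overclocked_fps: float, gap_fps: float) -> tuple[float, float]:
    """Return (implied stock FPS, overclocking gain as a percentage of stock)."""
    stock_fps = overclocked_fps - gap_fps
    gain_pct = gap_fps / stock_fps * 100.0
    return stock_fps, gain_pct


if __name__ == "__main__":
    for resolution, r in RESULTS.items():
        stock, gain = overclock_gain(r["overclocked_fps"], r["gap_fps"])
        # Prints e.g. "1920x1080: stock ~84.87 FPS, overclock gain ~0.98%"
        print(f"{resolution}: stock ~{stock:.2f} FPS, overclock gain ~{gain:.2f}%")
```

Under that assumption, the overclock is worth under 1% at both resolutions (roughly 0.98% at 1920×1080 and 0.41% at 1280×1024), which is consistent with the GPU rather than the CPU being the limiting factor at these settings.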

Tomb Raider Medium Image Quality Settings

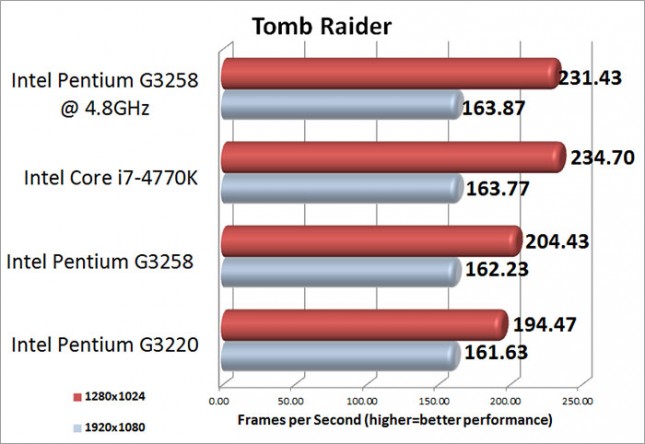

Benchmark Results: Tomb Raider doesn’t show the same performance gains and differences that we have seen in the previous titles. At 1920×1080, all of the processors were pretty close, with only 2.24 frames per second separating the fastest from the slowest. At 1280×1024, the Intel Core i7-4770K took a slight lead over the overclocked Pentium G3258, which hit an average of 231.43 frames per second.