Intel IDF 2011 Closing Keynote – Intel CTO Justin Rattner

The 10 Year Goal For Many-Core Computing

The final day of the Intel Developer Forum always starts off with a keynote presentation that covers where Intel feels the future is headed. We love presentations like this, as they give us a peek at upcoming and developing technologies.

This year's closing keynote started with what looked, for a split second, like Mooly Eden, the company's vice president and general manager. Then we noticed that it was Justin Rattner in disguise.

Off came the hat and the Intel CTO was ready for action.

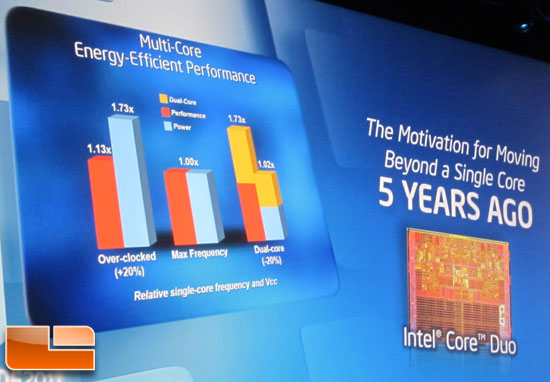

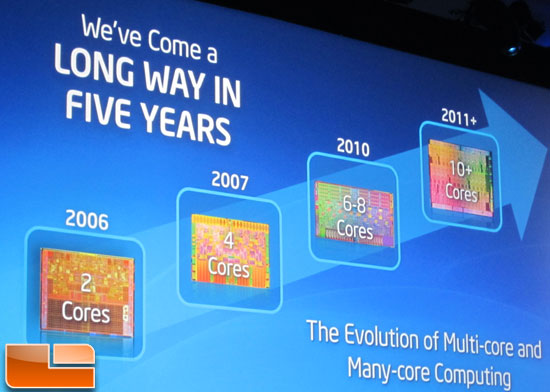

The Intel Core Duo micro-architecture was introduced five years ago, in 2006, and we have come a long way since. The evolution of multi-core and many-core computing has come together rather quickly.

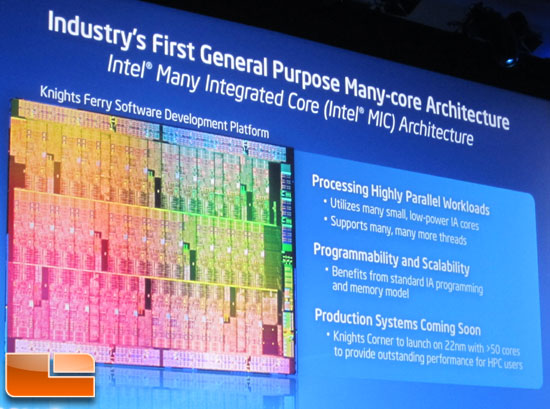

The next slide shows that we moved to dual-core processors in 2006 and are now up to tens of cores with Knights Ferry. Intel will soon launch Knights Corner on 22nm technology with more than 50 cores, in what we can only expect to be 2012.

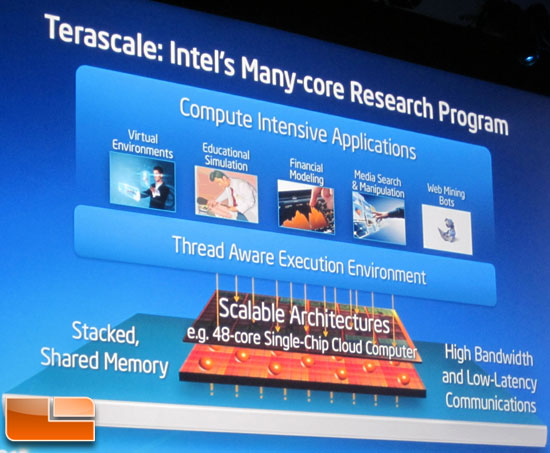

Intel’s Many-Core Research program is going full steam ahead, and the company is looking into areas like stacked and shared memory, new communications protocols like optical interconnects, and other low-latency, high-bandwidth solutions.

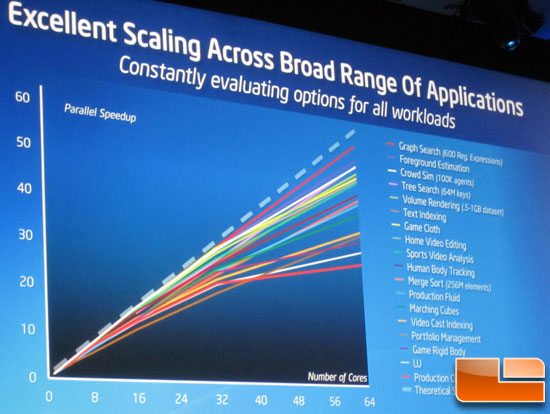

What Intel is seeing inside the research labs is a wide variety of workloads for many-core, and the architecture must be able to scale across this range of applications. Intel said it is seeing great scaling right now, with nothing scaling up by less than 30x when running on 64 cores.
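To put that 30x figure in perspective, here is a minimal Python sketch of the arithmetic. It assumes Amdahl's law as the scaling model, which is our own simplification and not something Intel presented; the function names are ours as well.

```python
def parallel_efficiency(speedup: float, cores: int) -> float:
    """Fraction of ideal linear scaling actually achieved."""
    return speedup / cores


def amdahl_speedup(serial_fraction: float, cores: int) -> float:
    """Speedup predicted by Amdahl's law: S = 1 / (s + (1 - s) / N)."""
    return 1.0 / (serial_fraction + (1.0 - serial_fraction) / cores)


def amdahl_serial_fraction(speedup: float, cores: int) -> float:
    """Invert Amdahl's law to get the serial fraction s implied by a
    measured speedup S on N cores: s = (N / S - 1) / (N - 1)."""
    return (cores / speedup - 1.0) / (cores - 1.0)


if __name__ == "__main__":
    # Figures quoted in the keynote: at least 30x on 64 cores.
    speedup, cores = 30.0, 64

    eff = parallel_efficiency(speedup, cores)
    s = amdahl_serial_fraction(speedup, cores)

    print(f"Parallel efficiency: {eff:.1%}")
    print(f"Implied serial fraction (Amdahl assumption): {s:.2%}")
```

Under that assumption, even the worst-scaling workload Intel showed would be running at roughly 47% of ideal linear scaling, which corresponds to a serial portion of just under 2% of the work. Real workloads also lose time to memory bandwidth and communication, so treat this as a rough lower bound on how parallel the code has to be.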

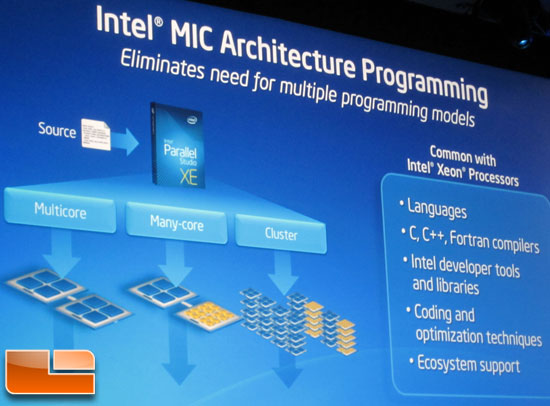

Intel is today introducing the Intel Many Integrated Core (Intel MIC) architecture. Knights Ferry is the software development platform for this technology, and many developers already have this system in their labs right now.

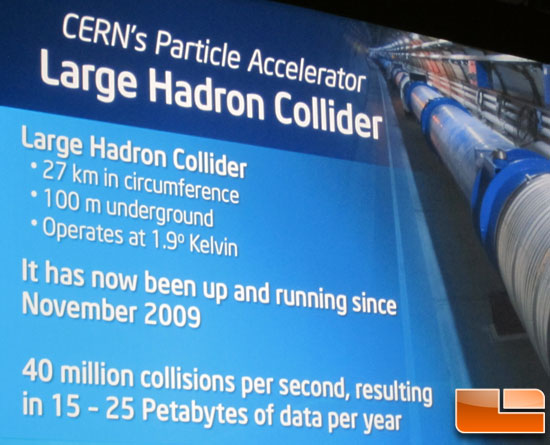

Andrzej Nowak from CERN openlab was brought on stage to talk about how the Large Hadron Collider needs a ton of processing power to handle the data that each collision delivers. The focus of the CERN project is to figure out what is going on with the Higgs particle. Intel reported that 15-25 petabytes of data are produced every year by the experiments at the facility.

That data must be analyzed, and to do that the scientists need the best and fastest processors to get value from the data as quickly as possible. The current computer grid has 250,000 processing cores.
