NVIDIA GPU Technology Conference Opening Keynote Highlights

Today, in San Jose, California, NVIDIA hosted its 6th Annual GPU Technology Conference in front of more than 4,000 attendees from 50 countries. Legit Reviews was there for a first-hand look at what new NVIDIA GPU technology is on its way and what the company's mighty GPUs are doing in research and development. That's right, folks, NVIDIA GPUs are used for more than just gaming. This year's theme, "Do you remember the Future?", is geared towards the thousands of researchers around the world who are using NVIDIA GPUs for work in fields ranging from physics and astronomy to medical research, autonomous navigation, and, of course, entertainment.

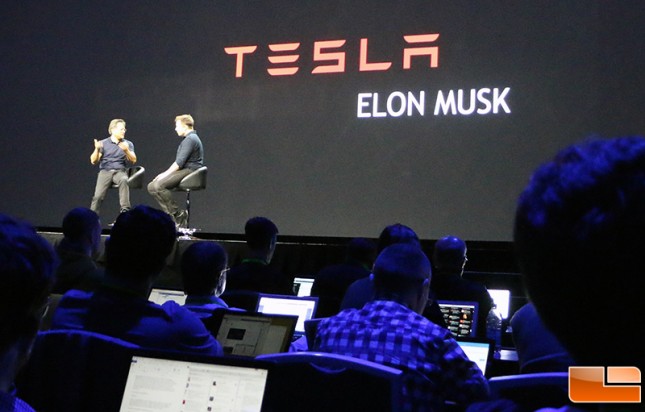

This event is the opening keynote address hosted by NVIDIA CEO Jen-Hsun Huang, who is expected to deliver a sort of ‘State of the Union’ address and introduce any new flagship product (Hello, TITAN X!). Besides NVIDIA's CEO, special guests include the founder of Tesla Motors and SpaceX, the one and only Elon Musk.

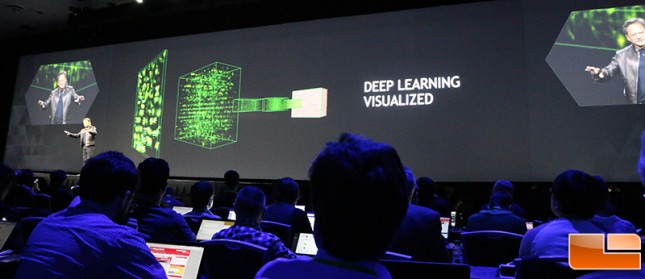

NVIDIA CEO Jen-Hsun Huang came out in his traditional black, looking quite comfortable in his leather jacket and button-up shirt. He announced that the opening session was going to cover four things: NVIDIA's newest GPU and Deep Learning; a very fast box and Deep Learning; NVIDIA's roadmap along with Deep Learning; and self-driving cars featuring, yep, you guessed it, Deep Learning.

For those who don't know, Deep Learning, as NVIDIA uses the term, covers machine learning techniques for artificial intelligence, with a strong emphasis on visual recognition. The idea is to model high-level abstractions in data using methods related to pattern recognition, neural networks, and machine vision, all tools that NVIDIA's GPU-accelerated CUDA architecture helps optimize.

Jen-Hsun Huang then gave a detailed view of the past year in visual computing, showing breakthroughs in gaming, in the automobile experience, and in the enterprise, specifically with the NVIDIA GRID virtual desktop infrastructure (VDI). GRID-accelerated VDI lets graphics-intensive applications stream to any connected screen, no matter where the user is. Apparently, this technology is being used to make the new Star Wars movie.

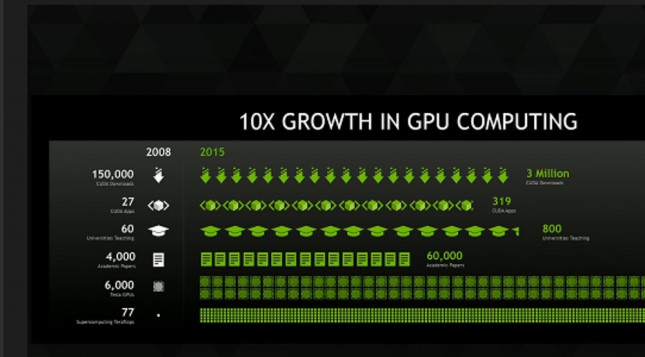

NVIDIA also highlighted the success of GPU computing. In 2009, there were approximately 150,000 CUDA downloads. In 2014, there were 3 million CUDA downloads, along with rapid growth in the number of CUDA applications, higher-education institutions teaching CUDA techniques, and whitepapers and scholarly journal articles written about CUDA GPUs.
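To put those download numbers in perspective, here is a quick back-of-the-envelope sketch. It uses only the two figures quoted in the keynote; the assumption of smooth year-over-year compounding is ours, not NVIDIA's, and the variable names are purely illustrative.

```python
# Download counts as quoted in the keynote.
downloads_2009 = 150_000
downloads_2014 = 3_000_000
years = 2014 - 2009

# Overall growth between the two data points.
growth_factor = downloads_2014 / downloads_2009

# Assumption: steady compound growth between 2009 and 2014.
# The keynote only gave the two endpoints, not the yearly figures.
annual_rate = growth_factor ** (1 / years) - 1

print(f"Overall growth: {growth_factor:.0f}x")
print(f"Implied compound annual growth: {annual_rate:.0%}")
```

In other words, the quoted figures work out to a 20-fold increase over five years, which would correspond to downloads growing by roughly 80 percent per year if the growth had been steady.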

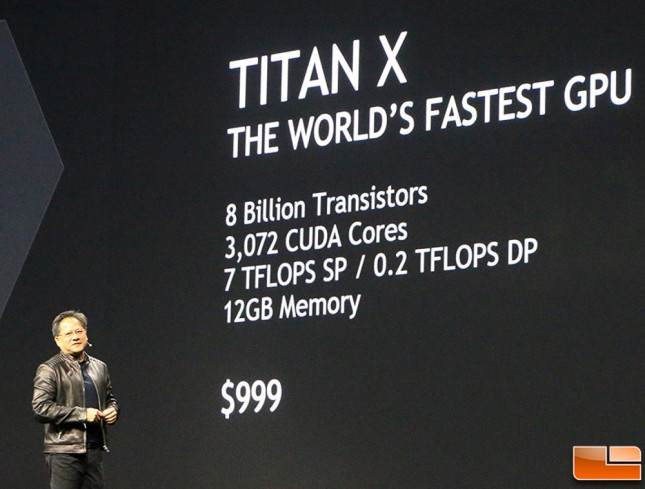

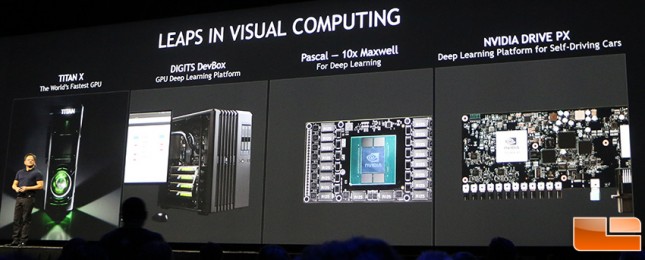

It was about this time that NVIDIA brought out its latest GPU, the NVIDIA GeForce GTX TITAN X, along with a very cool and slick video that introduced the new card. The NVIDIA TITAN X is based on the Maxwell architecture and has 8 billion transistors, 3,072 CUDA cores, 7 teraflops of single-precision throughput, and a 12GB framebuffer. The MSRP of the new card will be $999.

NVIDIA ran a real-time animation called Red Kite, produced by Epic Games, that depicted a sprawling valley covering 100 square miles and containing over 13 million plants. The animation, running on a TITAN X, showed off the raw GPU power needed to render realistic rocks, shadows, and water.

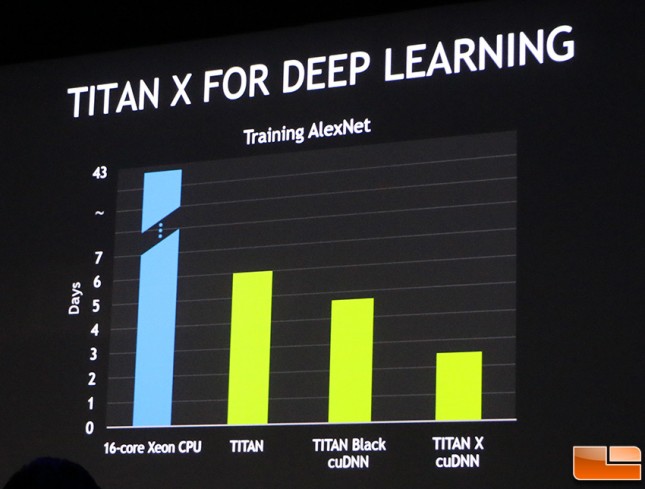

As NVIDIA's CEO Jen-Hsun Huang noted, the TITAN X is more than just a basic graphics card; it's also about Deep Learning. “When you speed something up from a month and a half and you reduce it to a week, it's the difference between your willingness to do the work and maybe not at all. Down to three days is utterly life changing.”

NVIDIA introduced a researcher, Mike Houston, who took the audience on a journey explaining what AlexNet is and how it works. In short, AlexNet is a neural network with over 60 million parameters that is trained to identify images.

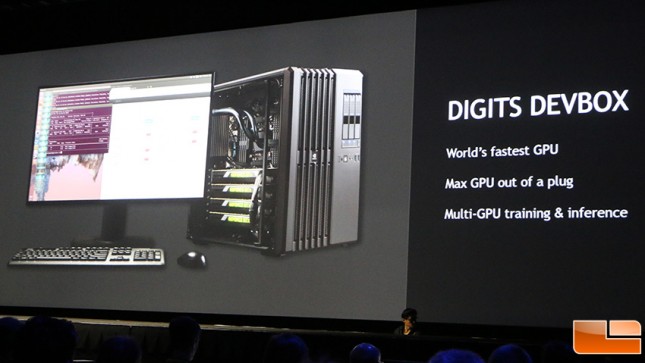

NVIDIA's next announcement was the Digits Devbox. This Devbox system packs four of NVIDIA's TITAN X graphics cards in a Quad-SLI arrangement, making it one of the most powerful computational devices on the planet to fit in a single enclosure.

Along with the GPUs, the Devbox includes an ASUS X99 motherboard with an Intel Core i7 Haswell-E processor and up to 64GB of DDR4 RAM. All of this is driven by a power supply unit that delivers 1500 watts of power. This system is obviously designed for developers and runs Ubuntu 14.04 and NVIDIA CUDA application software, including the Digits framework to help researchers configure, monitor, and analyze data from neural networks. The Devbox system starts at $15,000 and developers can sign up for access today.

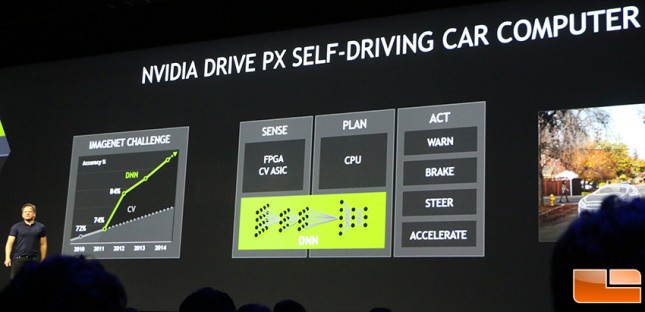

There will be a new developer's kit for NVIDIA's self-driving automobile platform, called Drive PX. It will go on sale in May for $10,000 and will be powered by two NVIDIA Tegra X1 processors.

Elon Musk came to the stage about an hour and a half after the start of Huang's keynote presentation and was met with warm applause. Musk, the founder of Tesla Motors and SpaceX, stated that self-driving automobiles are a fairly easy problem to solve. He added that when people think of technology like autonomous vehicles, they need to think back to a time when we had elevator operators; now elevators are automatic, and people don't have any problems using that technology.

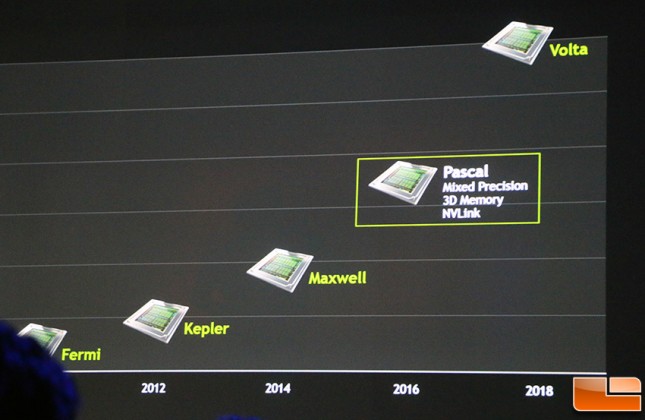

Over 2 hours of presentation, NVIDIA's CEO Jen-Hsun Huang did a great job of showing the world the power of GPU processing and of setting the foundation for future research and development. NVIDIA is clearly leading the way with its CUDA technology, TITAN X, Digits Devbox, Pascal architecture, Drive PX, and Deep Learning applications.

We will have lots more NVIDIA GTC coverage, so stay tuned!