NVIDIA GeForce GTX 580 GF110 Fermi Video Card Review

Taking The GeForce GTX 580 Apart

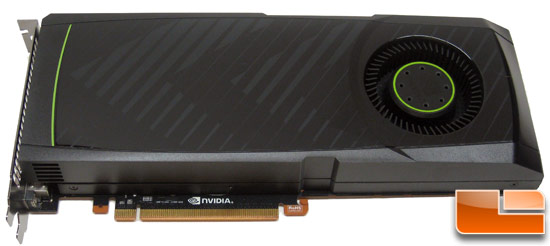

Since this is the first time I have actually seen a GeForce GTX 580 production card in person, I had to take it apart to see what parts are being used. Okay, I really just wanted to see the GF110 GPU core and find out what brand of GDDR5 memory ICs were being used, but since I had to take it all the way apart I might as well walk you through the whole process. The front of the GeForce GTX 580 graphics card consists of a glossy black plastic shroud that is screwed onto a metal assembly.

The fan shroud on the NVIDIA GeForce GTX 480 just clipped on, but on the GeForce GTX 580 they held it down with six screws with thread locking compound on them. Once you get the screws off you can gently lift the cover off. This is as far as most people will ever need to go as you can easily use compressed air to blow the dust out of the fins if needed.

Here you can see how air flows through the card. With the fan shroud on, the majority of the hot air from the GPU is exhausted out the rear of the video card. This is very different from the GeForce GTX 480, which had a radiator plate on it and left much of the heat inside the PC case.

Next you can remove the four larger Phillips head spring screws that attach the heatsink to the video card. With the heatsink removed you can see the core for the very first time! If you ever want to change out the thermal paste, this is how far you need to go.

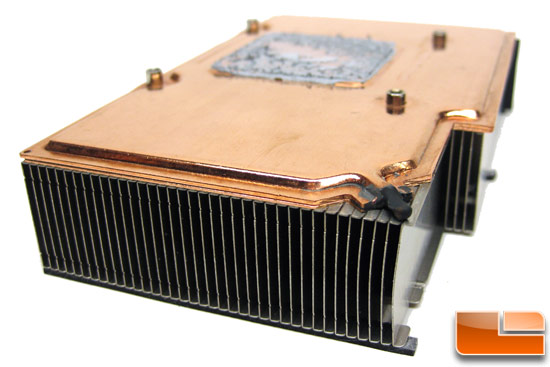

Here is a better look at the Vapor Chamber cooler that NVIDIA is using on the GeForce GTX580 to help keep it nice and cool. You can see the copper bottom and this is the part that contains the liquid that comes to a boil to help cool the GPU.

NVIDIA didn’t machine the entire base flat, as the base on our card was only partly machined. NVIDIA said that they have set tolerances for the base and that our HSF base finish is within specification. We have a feeling that enthusiasts and overclockers will find that lapping this heatsink is an easy way to improve performance, as ours was not perfectly flat by any means.

The next step is removing all 18 of the small screws that hold on the aluminum frame! Once all of these screws are removed you can pull off the aluminum frame that helps cool the board’s components and doubles as the fan mount. Notice the thermal pads on the frame to help cool down the voltage regulators and the GDDR5 memory ICs.

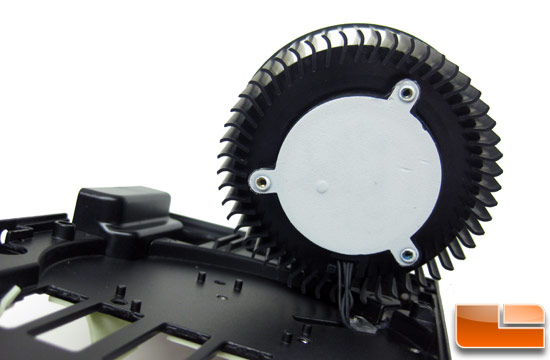

After the aluminum frame is removed you can take off the three Phillips screws that hold the squirrel-cage fan down. Flipping it over, we expected to see a brand name and model number like we did with the GTX 480, but instead we see a foam pad to help keep noise levels down. As you can see, NVIDIA has really tried to make the GTX 580 quieter.

Now that the card is stripped down and pretty much bare, we can take a good look at the heart of the GeForce GTX 580: the GF110 core shown above. The core is labeled GF110-375-A1, which we are guessing means an A1 stepping of the GF110 silicon. This GF110 GPU has all 512 cores enabled, whereas the GF100 used on the GeForce GTX 480 only had 480 enabled for various reasons.

Here is the picture that our readers always request to give you an idea of the core size next to something most people are familiar with, the United States quarter!

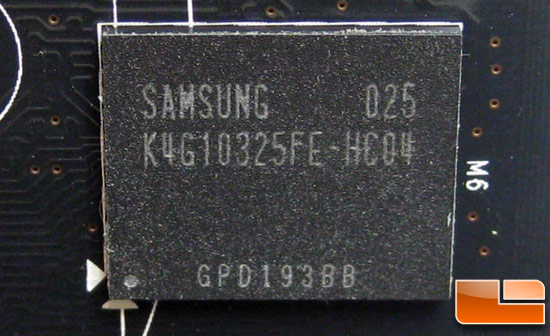

Located around the GF110 GPU core are twelve Samsung GDDR5 memory chips for the card’s memory. We were shocked to find that they carry the exact same part number as the chips on our NVIDIA GeForce GTX 480 reference card. The part number on the memory ICs is “K4G10325FE-HC04”. That means these are 0.40ns parts that are speed rated at 5Gbps, according to Samsung.
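For readers wondering how a 0.40ns rating turns into 5Gbps, here is a rough sketch of the arithmetic. It assumes the 0.40ns figure is the clock cycle time and that data moves on both edges of that clock. The function names are made up for illustration, and the 32-bit interface per chip is our assumption rather than something printed on the ICs. The bandwidth figure is the theoretical ceiling at the rated speed, not what the GeForce GTX 580 actually runs its memory at.

```python
def rated_gbps_per_pin(cycle_time_ns: float) -> float:
    """Per-pin data rate in Gbps for a given clock cycle time in ns.

    Assumes two bits are transferred per clock cycle (double data rate).
    """
    clock_ghz = 1.0 / cycle_time_ns  # a 0.40 ns period is a 2.5 GHz clock
    return 2.0 * clock_ghz


def peak_bandwidth_gb_per_s(gbps_per_pin: float, chips: int, bits_per_chip: int) -> float:
    """Theoretical peak bandwidth in GB/s across all memory chips."""
    total_bus_bits = chips * bits_per_chip  # every chip adds its data pins to the bus
    return gbps_per_pin * total_bus_bits / 8.0  # 8 bits per byte


if __name__ == "__main__":
    rate = rated_gbps_per_pin(0.40)
    # Twelve chips as counted on the board; 32 bits per chip is an assumption.
    ceiling = peak_bandwidth_gb_per_s(rate, chips=12, bits_per_chip=32)
    print(f"Rated speed: {rate:.1f} Gbps per pin")
    print(f"Ceiling at rated speed: {ceiling:.1f} GB/s")
```

A card's real memory bandwidth depends on the memory clock the board actually runs, which can be lower than what the chips are rated for.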

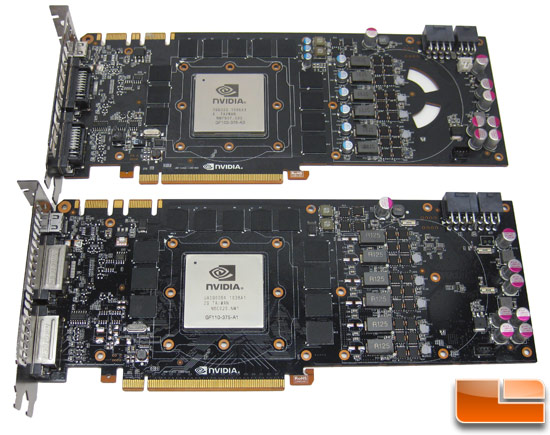

Here you can see the NVIDIA GeForce GTX 480 graphics card on top and the GeForce GTX 580 graphics card on the bottom to get an idea of how they differ. As you can see, both cards use nearly identical 10.5″ Printed Circuit Boards (PCB) and have a layout that is shockingly similar if you ask me. The GeForce GTX 580 doesn’t have the solid-state capacitors located around the power phases and lacks the vent holes and the jumper underneath the power connectors. Other than that and a brand change on the DVI connectors, the cards are nearly identical!
