Force OGSSAA With NVIDIA Driver Trick

OGSSAA renders the image at a resolution higher than the monitor’s native one and scales it back down to fit, forcing anti-aliasing in applications that might not normally support it. OGSSAA stands for Ordered Grid Super-Sampling Anti-Aliasing and is one of the oldest forms of anti-aliasing; at its core, the idea is a simple one. The guys over at Linus Tech Tips made a video detailing the process on NVIDIA cards. The process is also doable on AMD cards, albeit slightly more convoluted.
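To make the idea concrete, here is a minimal sketch of ordered-grid downsampling in Python. It assumes an integer scale factor and a plain box filter (averaging each block of samples); the filter the driver or display actually applies is not covered in this article, and `downsample_box` is a made-up name for illustration only.

```python
def downsample_box(image, factor):
    """Average each factor x factor block of a grayscale image (a list of rows)."""
    height, width = len(image), len(image[0])
    if height % factor or width % factor:
        raise ValueError("image dimensions must be divisible by factor")
    out = []
    for y in range(0, height, factor):
        row = []
        for x in range(0, width, factor):
            # Collect the ordered grid of samples that maps onto one output pixel.
            block = [image[y + dy][x + dx]
                     for dy in range(factor)
                     for dx in range(factor)]
            row.append(sum(block) / len(block))
        out.append(row)
    return out


# A hard diagonal edge rendered at 4x4 (0 = black, 1 = white).
hi_res = [
    [1, 1, 1, 1],
    [0, 1, 1, 1],
    [0, 0, 1, 1],
    [0, 0, 0, 1],
]
lo_res = downsample_box(hi_res, 2)
print(lo_res)  # [[0.75, 1.0], [0.0, 0.75]]
```

The pixels along the edge come out as intermediate greys rather than pure black or white, which is the smoothing effect supersampling is after.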

[youtube]https://www.youtube.com/watch?v=hTszNDyuAhg[/youtube]

There are really only two steps toward implementation:

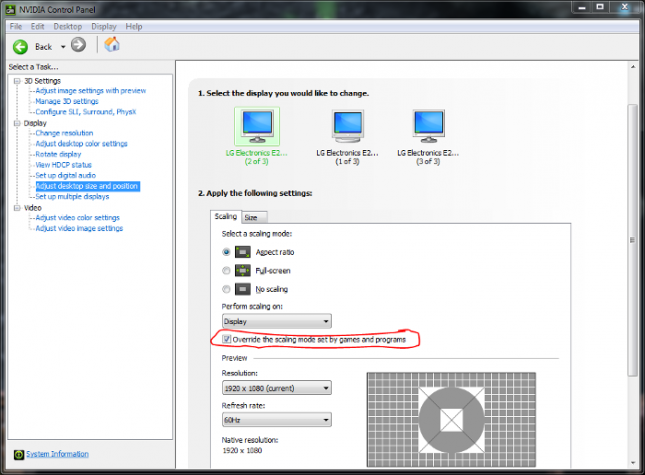

- Enable Override the scaling mode set by games and programs under Adjust desktop size and position

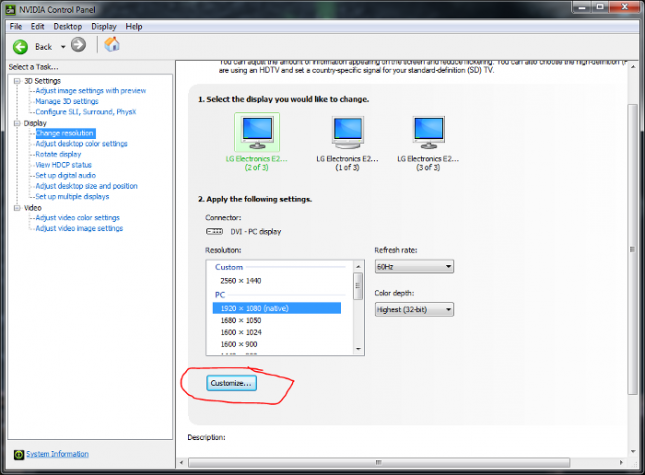

- Set a custom resolution under Change resolution

From this point it’s best to start at a reasonable resolution like 2560x1440 to test your monitor’s capability. Results will vary depending on GPU model and connection. The connection plays a major role, as VGA, DVI, HDMI, and DisplayPort have differing maximum resolutions. On dual-link DVI, I was eventually able to reach 3200x1800 with a 4GB EVGA Superclocked GTX 670; going any higher resulted in a black screen or driver crashes. If you’re using a newer DisplayPort connection and a high-end GPU, you should have no trouble pushing the 4K envelope.
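For a rough sense of how much extra work each custom resolution asks of the GPU, the sketch below compares the resolutions mentioned here against a 1920x1080 native panel. The helper name `supersample_stats` is hypothetical, and the 1080p default is an assumption about your monitor; swap in your own native resolution.

```python
def supersample_stats(render, native=(1920, 1080)):
    """Return per-axis scale and total pixel ratio of a render resolution
    relative to the native resolution (assumed 1920x1080 by default)."""
    render_w, render_h = render
    native_w, native_h = native
    return {
        "axis_scale": render_w / native_w,
        "pixel_ratio": (render_w * render_h) / (native_w * native_h),
    }


for res in [(2560, 1440), (3200, 1800), (3840, 2160)]:
    stats = supersample_stats(res)
    print(f"{res[0]}x{res[1]}: {stats['axis_scale']:.2f}x per axis, "
          f"{stats['pixel_ratio']:.2f}x the pixels")
# 2560x1440: 1.33x per axis, 1.78x the pixels
# 3200x1800: 1.67x per axis, 2.78x the pixels
# 3840x2160: 2.00x per axis, 4.00x the pixels
```

Only the 4K case is an even 2x per axis on a 1080p panel; the others map a fractional number of rendered pixels onto each physical pixel.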

Once the resolution is set and applied, the Windows desktop automatically switches to the new resolution. Even at 1440p on a 1080p monitor, shortcut, file, and folder text starts to blur into an increasingly illegible mess of grey pixels, and it only worsens at higher resolutions. However, setting the Windows Control Panel resolution back to the monitor’s native maximum leaves the desktop looking normal while keeping the custom resolution available where it really matters: games! Starcraft 2 serves as an interesting benchmark for this solution, as its only AA option is Blizzard’s infamous checkbox, which apparently toggles between some kind of AA and no AA at all.

Hardware used for testing:

- Intel 2600K @ 4.0GHz

- 16GB DDR3 @ 1600MHz

- SLI EVGA SC GTX 670 4GB

- Monitor: LG E2242

First, the resolution must be set in-game. The newly configured resolutions are listed like any standard option, regardless of the monitor’s native resolution.

With the rest of the graphics settings also set to their respective maximums, but AA disabled, I cycled through the list. (All benchmarks were performed on the same map, with the same starting location, race, and no actions within the match to potentially throw off results.)

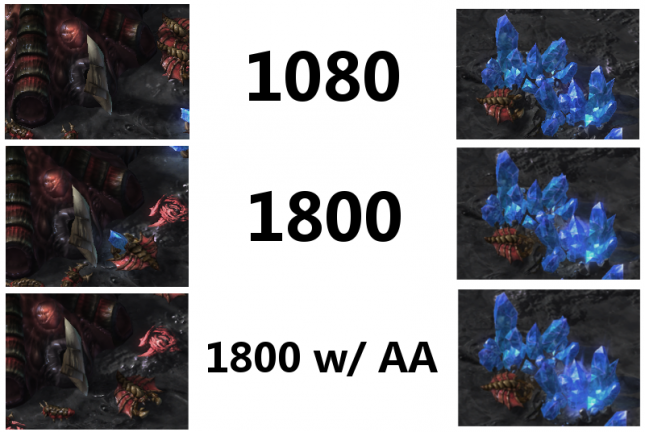

The effects are actually pretty impressive. Notice in particular the ‘bone’ on the left side of the image and the clear stepping of pixels down the straight edges. On the right, the stepping along the edges of the crystals completely disappears. GPU usage scaled steadily across the board: from 65% GPU usage and 880MB VRAM allocation at 1080p up to 80% GPU usage and 1170MB VRAM allocation at 1800p. Gamers running Surround or Eyefinity are absolutely going to feel the hit if applying this to three monitors. Everything functioned perfectly in Starcraft 2, but with settings maxed out and the average still holding at 100fps, why don’t we throw something a little more challenging at it? Battlefield 4 perhaps…

Framerates really started to drop as resource usage went through the roof. At 1080p with all settings maxed out and 4x MSAA, the test rig was putting out 100+fps on the campaign’s third chapter, South China Sea. However, the bump to 1440p cut that to about 77fps, and 1800p brought it down to 45fps. VRAM utilization at this point was up to 2600MB. With every step up in resolution, screen tearing and a slight input lag grew worse until the configuration became unplayable. The downsampling also seems to fight the native AA at this level of graphical fidelity.
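As a back-of-the-envelope check on those Battlefield 4 numbers, the sketch below converts each result into rendered megapixels per second. It treats “100+fps” as exactly 100 and “about 77fps” as 77, so the 1080p figure is a lower bound; the function name is made up for illustration.

```python
# Framerates reported above (1080p is "100+", treated here as 100).
results = {
    (1920, 1080): 100,
    (2560, 1440): 77,
    (3200, 1800): 45,
}


def megapixels_per_second(resolution, fps):
    """Pixels rendered per second, in millions."""
    width, height = resolution
    return width * height * fps / 1e6


throughput = {res: megapixels_per_second(res, fps) for res, fps in results.items()}
for (width, height), mpps in throughput.items():
    print(f"{width}x{height}: {mpps:.1f} MP/s")
# 1920x1080: 207.4 MP/s
# 2560x1440: 283.9 MP/s
# 3200x1800: 259.2 MP/s
```

The throughput is not constant, so the framerate is not falling in simple proportion to pixel count. One possible reading is that something other than raw pixel work limits the 1080p result, but these three data points are not enough to say for certain.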

Looking closely at some of the more intricately shaped objects, like the valve wheel on the left, smoothness seems to be giving way to blurriness. Some models almost look warped from so much post-processing. While it may not be great for every game, OGSSAA can provide higher fidelity for older games or applications which don’t currently support much in the way of AA. It’s also an excellent way to test whether your setup is ready for higher resolutions like 1440p, 1600p, or 4K!