Ashes of the Singularity DirectX 12 vs DirectX 11 Benchmark Performance

DX12 versus DX11 Game Testing

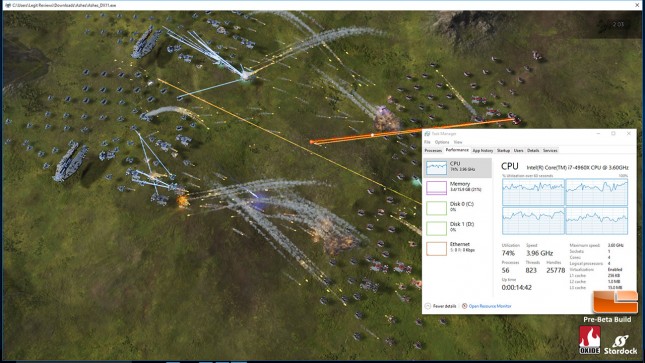

DirectX 11 CPU Usage – Ashes of the Singularity:

With our Intel Core i7-4960X processor running with just four active cores and Intel Hyper-Threading disabled, we found that CPU load was about 75% when running the Ashes of the Singularity benchmark in DirectX 11 mode.

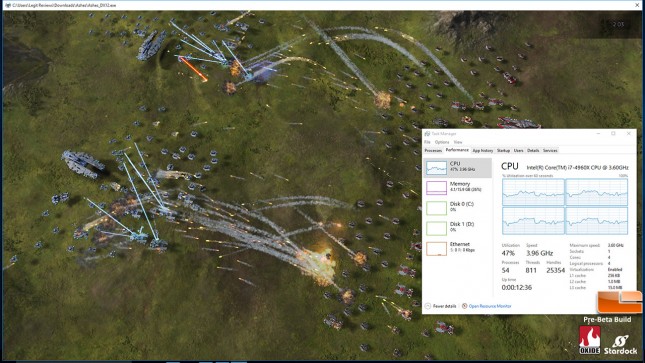

DirectX 12 CPU Usage – Ashes of the Singularity:

When we ran the DirectX 12 version of the benchmark, CPU load dropped to 50% and the test system's power consumption at the wall fell by about 30 watts. DX12 is supposed to make better use of all of your processor's available CPU threads to maximize performance, instead of putting the user-mode driver, DXGKernel, and kernel-mode driver all on a single thread. By distributing the load across multiple threads and removing the kernel-mode driver from the workflow, it should improve in-game performance by getting the GPU what it needs to render each frame sooner. GPU-limited game titles won't see huge performance improvements, but CPU-limited game titles should see solid improvements.
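To illustrate the single-thread versus multi-thread difference described above, here is a minimal conceptual sketch in Python. It is not the Direct3D 12 API: the scene (a list of integer "draw call" IDs), the `record` function, the chunking scheme and the worker count are all hypothetical stand-ins. The DX11-style path records every command on one thread, while the DX12-style path records separate command lists on several worker threads and then submits them to a single queue in their original order. Because of CPython's global interpreter lock, this pure-Python version will not actually run faster; it only models how the work is divided.

```python
from concurrent.futures import ThreadPoolExecutor

# Hypothetical scene: each "draw" is just an integer ID standing in for a draw call.
DRAWS = list(range(10_000))

def record(chunk):
    """Pretend to translate draw calls into GPU commands (a 'command list')."""
    return [("draw", d) for d in chunk]

def dx11_style(draws):
    # One thread records and submits every command, in order.
    return record(draws)

def dx12_style(draws, workers=4):
    # Split the scene into chunks and record each chunk on its own worker thread.
    size = max(1, -(-len(draws) // workers))  # ceiling division, never zero
    chunks = [draws[i:i + size] for i in range(0, len(draws), size)]
    with ThreadPoolExecutor(max_workers=workers) as pool:
        command_lists = list(pool.map(record, chunks))  # map() keeps chunk order
    # Submit the finished command lists to a single queue in their original order.
    queue = []
    for command_list in command_lists:
        queue.extend(command_list)
    return queue

# Both paths must produce the same command stream; only the recording differs.
assert dx11_style(DRAWS) == dx12_style(DRAWS)
```

The key design point is that recording is parallel but submission order is preserved, so the GPU sees the same work either way.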

DirectX 11 Driver Overhead – Ashes of the Singularity:

After the built-in game benchmark finishes, it displays a graph that plots driver overhead (in orange) over the course of the benchmark run. As you can see in the image above, driver overhead is present in DirectX 11 mode, and it varies across the different scenes of the three-minute benchmark.

DirectX 12 Driver Overhead – Ashes of the Singularity:

When running the DirectX 12 version of the benchmark, we see that driver overhead drops to nearly zero and stays there for the entire run, which is exactly what we expected to see with DX12 enabled. We also see that we are basically 100% GPU limited, even with the Intel Core i7-4960X running at 3.6 GHz with just four cores active and Intel Hyper-Threading disabled.

This is what the developer has to say about the benchmark results:

Once the test has finished, you will see a graph that plots the values over the 180-second run. The driver overhead score is the percentage of total frame time spent in the driver; all other scores are in FPS.

In the breakdown screen, the top three scores are the meta marks of the shots; those are the most important numbers. If we want to look at raw GPU performance, the overall score or the Normal score is a good choice. If we want to look at D3D12 performance, we recommend using the FPS score on the Heavy mark, which is also what we recommend using as a CPU score.

In DX11, it is not possible to track some information, such as driver throughput or CPU score, so that data will not be provided when running the benchmark in DX11 mode.

DATA POINTS EXPLAINED

Because we have more precise data with D3D12, we can provide some new data not provided before. The first data point, FPS, is well known to everyone. Here is what each data point means:

- FPS. The average frames per second, calculated as the number of frames rendered divided by the total runtime of the benchmark.

- Weighted FPS. Slow frames are weighted more than fast frames, with the equation being the square root of the sum of the squares of all individual frame times.

- Percent GPU bound. The percentage of frames that were blocked by the GPU. If this number is near 100%, then a faster CPU would make no difference to the score.

- CPU FPS. An estimated FPS based on how fast the system would have been if it wasn't blocked by the GPU. That is, what would happen if we put a much faster GPU in the system. This number may be the true delta between DX11 and DX12. That is, if DX11 is CPU bound, then this number will indicate how much faster DX12 would be if we had a GPU fast enough to keep up. This field is actually a very accurate measure of CPU performance, especially on the Heavy Batches sub-benchmark.

- Batches per ms. Batches are also known as draw calls, and this number includes compute dispatches as well. It tells us roughly how fast the driver is processing commands.
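The data points above can be sketched in Python as a summary over a per-frame log. Oxide has not published its exact formulas, so treat this as an illustration under stated assumptions: the `Frame` record and all of its fields are hypothetical; weighted FPS is interpreted as 1000 divided by the root-mean-square frame time (one reading of the square-root-of-squares description); CPU FPS assumes the benchmark knows how long the CPU needed to build each frame; and batches per ms is taken over the total runtime. Driver overhead follows the developer's description earlier: the share of total frame time spent in the driver.

```python
import math
from dataclasses import dataclass

@dataclass
class Frame:                # hypothetical per-frame record, not Oxide's format
    frame_ms: float         # wall-clock time for the frame
    cpu_ms: float           # CPU time needed to build the frame (assumed known)
    driver_ms: float        # portion of the frame spent in the driver
    gpu_bound: bool         # True if the CPU had to wait on the GPU this frame
    batches: int            # draw calls plus compute dispatches submitted

def summarize(frames):
    n = len(frames)
    total_ms = sum(f.frame_ms for f in frames)
    # Root-mean-square frame time: slow frames count more than fast ones.
    rms_ms = math.sqrt(sum(f.frame_ms ** 2 for f in frames) / n)
    return {
        "fps": 1000.0 * n / total_ms,                    # frames / runtime
        "weighted_fps": 1000.0 / rms_ms,                 # assumed formula
        "pct_gpu_bound": 100.0 * sum(f.gpu_bound for f in frames) / n,
        "cpu_fps": 1000.0 * n / sum(f.cpu_ms for f in frames),  # if never GPU-blocked
        "batches_per_ms": sum(f.batches for f in frames) / total_ms,
        "driver_overhead_pct": 100.0 * sum(f.driver_ms for f in frames) / total_ms,
    }

# Made-up example: one fast frame and one slow frame.
frames = [
    Frame(frame_ms=10.0, cpu_ms=8.0, driver_ms=1.0, gpu_bound=True, batches=1000),
    Frame(frame_ms=30.0, cpu_ms=12.0, driver_ms=3.0, gpu_bound=False, batches=3000),
]
print(summarize(frames))
```

With these two made-up frames, plain FPS is 50 while the weighted FPS is about 44.7, which shows how a single slow frame pulls the weighted score below the simple average.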

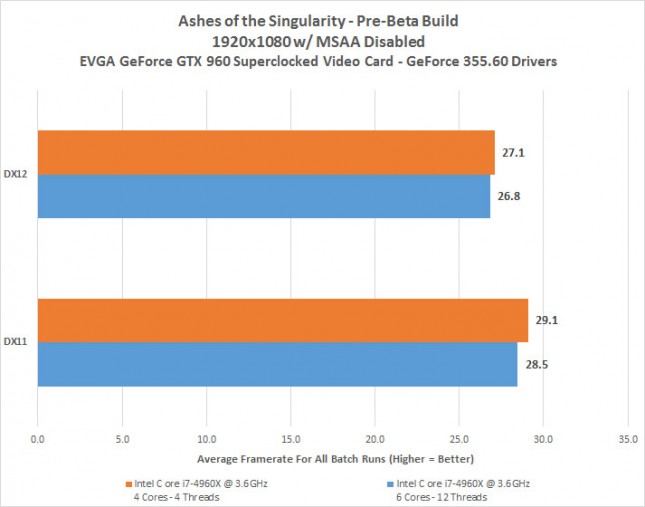

Let’s chart our results for you, so you can better see the differences.

The test results with the EVGA GeForce GTX 960 SSC 4GB video card running at 1920x1080 with MSAA disabled showed that DX11 actually had a higher average frame rate than DX12. It didn't matter whether we had 4 threads or 12 threads active on the Intel Core i7-4960X processor. This is a bit shocking, since when we looked at DirectX 12 performance improvements with Futuremark's API Overhead Feature Test, we found that a higher thread count meant higher DirectX 12 performance. We checked with the PR firm that Stardock is using, and they confirmed that they are seeing similar results. Using one alpha benchmark as an indicator of how DirectX 12 gaming performance compares to DirectX 11 didn't feel too smart, so we stopped our testing here, as we saw no point in spending more than a day benchmarking and writing this article up. Down the road, Stardock plans on releasing new benchmark versions as the game matures, and we can't wait to see how those look!

The day before the embargo on these DX12 benchmark results lifted, we were told that Dan Baker, co-founder of Oxide Games, had put up a blog post addressing some of our DX12 concerns with his benchmark. He basically dismissed NVIDIA's claims that the Ashes of the Singularity benchmark has application-side MSAA issues, said that it's a video card driver issue, and noted that AMD, Intel and NVIDIA have had access to the source code for over a year. Obviously there is an issue somewhere, so we are comfortable with our decision to halt testing and wait for this all to blow over. You can read some of Mr. Baker's comments below.

There are incorrect statements regarding issues with MSAA. Specifically, that the application has a bug in it which precludes the validity of the test. We assure everyone that is absolutely not the case. Our code has been reviewed by Nvidia, Microsoft, AMD and Intel. It has passed the very thorough D3D12 validation system provided by Microsoft specifically designed to validate against incorrect usages. All IHVs have had access to our source code for over a year, and we can confirm that both Nvidia and AMD compile our very latest changes on a daily basis and have been running our application in their labs for months. Fundamentally, the MSAA path is essentially unchanged in DX11 and DX12. Any statement which says there is a bug in the application should be disregarded as inaccurate information.

So what is going on then? Our analysis indicates that any D3D12 problems are quite mundane. New API, new drivers. Some optimizations that the drivers are doing in DX11 just aren't working in DX12 yet. Oxide believes it has identified some of the issues with MSAA and is working to implement workarounds in our code. This in no way affects the validity of a DX12 to DX12 test, as the same exact workload gets sent to everyone's GPUs. This type of optimization is just the nature of brand-new APIs with immature drivers.

Immature drivers are nothing to be concerned about. This is the simple fact that DirectX 12 is brand-new, and it will take time for developers and graphics vendors to optimize their use of it. We remember the first days of DX11. Nothing worked; it was slower than DX9, buggy and so forth. It took years for it to be solidly better than previous technology. DirectX 12, by contrast, is in far better shape than DX11 was at launch. Regardless of the hardware, DirectX 12 is a big win for PC gamers. It allows games to make full use of their graphics and CPU by eliminating the serialization of graphics commands between the processor and the graphics card.

I don't think anyone will be surprised when I say that DirectX 12 performance, on your hardware, will get better and better as drivers mature.

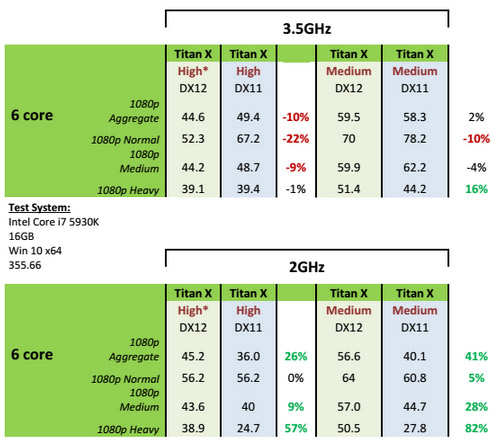

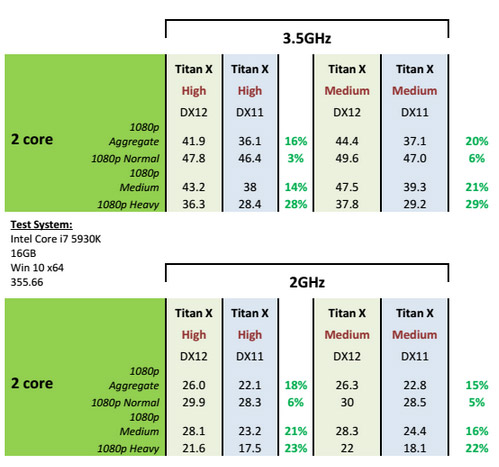

NVIDIA did some testing with a GeForce GTX Titan X and an Intel Core i7-5930K processor and saw performance drop across the board when moving from DX11 to DX12 with high image quality settings. When they lowered the image quality settings (even though they were averaging nearly 40 FPS on the 1080p Heavy test), they found that moving to DX12 did improve performance on the 1080p Heavy test. Instead of reducing the number of active cores on the processor, the team at NVIDIA dropped the clock speed of the Intel Core i7-5930K from 3.5 GHz to 2.0 GHz and saw huge performance gains going from DX11 to DX12. This is all fine and dandy and shows that DX12 is working, but it's not a realistic scenario for real-world gamers. How many desktop gamers do you know who are running an Intel processor at clock speeds that low? It is really disappointing to see NVIDIA basically confirm our results, and then find that the only test scenario showing any benefit required taking a $1,000 video card, pairing it with a 6-core processor, and downclocking that processor to 2 GHz in order to show that DX11-to-DX12 performance gains can be had.
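NVIDIA's downclocking result fits a simple bottleneck model in which each frame takes as long as the slower of the CPU work and the GPU work. The Python sketch below uses made-up per-frame timings (not NVIDIA's data) and assumes CPU frame time scales inversely with clock speed, which is only a rough approximation. The `fps` and `scale_cpu` helpers and all of the numbers are hypothetical.

```python
def fps(cpu_ms, gpu_ms):
    # Simplified pipeline model: the slower of CPU and GPU sets the frame time.
    return 1000.0 / max(cpu_ms, gpu_ms)

def scale_cpu(cpu_ms, base_ghz, new_ghz):
    # Assumes CPU time per frame scales inversely with clock speed (rough approximation).
    return cpu_ms * base_ghz / new_ghz

GPU_MS = 20.0                             # hypothetical GPU time per frame
CPU_MS = {"DX11": 16.0, "DX12": 8.0}      # hypothetical CPU time per frame at 3.5 GHz

for ghz in (3.5, 2.0):
    for api, cpu_ms in CPU_MS.items():
        t = scale_cpu(cpu_ms, 3.5, ghz)
        print(f"{ghz} GHz {api}: {fps(t, GPU_MS):.1f} FPS")
```

With these hypothetical numbers both APIs reach 50 FPS at 3.5 GHz because the GPU is the bottleneck, so DX12's lower CPU cost is invisible. At 2.0 GHz the DX11 path becomes CPU-bound and falls to about 35.7 FPS, while DX12 stays GPU-bound at 50 FPS. This mirrors why downclocking the CPU exposes DX12 gains that a GPU-limited configuration hides.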

The only other test NVIDIA showed was dual-core performance, and they found that some nice performance gains could be seen on a dual-core platform. But again, how many dual-core gamers are left out there? The Intel Pentium Processor G3258, a somewhat popular dual-core processor clocked at 3.2 GHz, came out over a year ago. You can read our G3258 CPU review & gaming performance articles if you are interested in how that processor performed.

We talked with NVIDIA and AMD about the benchmark, and both noted that this is alpha software; we took that to mean they felt it might not be an accurate measurement of DX12 performance at this point in time. The benchmark basically tells you how your system hardware will run a series of scenes from the alpha version of Ashes of the Singularity, and that is about it. In our conversations, NVIDIA made it clear that it feels better examples of DirectX 12 performance, without as many of the issues we experienced in this benchmark, will be coming out shortly.

It’s cool to see some DirectX 12 game benchmarks coming out, but the results here are far from exciting, and there are clearly performance issues related to the video card drivers, the application, or a combination of both. These DX12 results are interesting to look at, but it’s obvious that DX12 is new and not everyone has it dialed in yet!

In closing, we found out that Stardock/Oxide will be releasing the standalone Ashes of the Singularity benchmark in a few weeks, and the public will be able to download and use it for free. By that time, we have a feeling many of the ‘issues’ we ran into will be solved, and we’ll be able to look at performance on both AMD and NVIDIA graphics cards with the latest drivers and game build.