NVIDIA Shows Off G-SYNC Technology on StarCraft at BlizzCon 2013

NVIDIA was showing off its brand-new G-SYNC technology this weekend at BlizzCon 2013. G-SYNC is a technology for gaming monitors that alleviates screen tearing and V-Sync input lag and enhances the capabilities of existing monitor panels. Until now, NVIDIA had only featured G-SYNC running as a demo in Tomb Raider. At BlizzCon 2013, NVIDIA had G-SYNC set up on ASUS VG248QE monitors running StarCraft, side by side with comparison monitors, one with V-Sync enabled and the other without. In person, you could definitely tell that G-SYNC made the actual gameplay smoother.

In the video, you can see the tearing and how G-SYNC corrects it. According to NVIDIA, G-SYNC is only waiting on hardware partners such as ASUS, BenQ, ViewSonic, and Philips to roll it out to consumers.

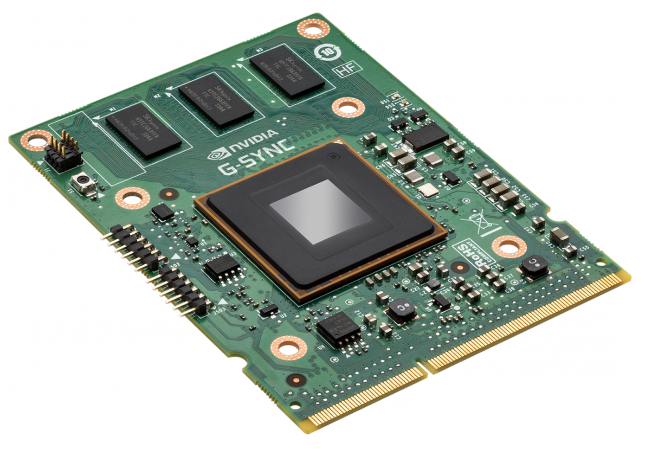

NVIDIA confirmed to us that it will be offering G-SYNC do-it-yourself kits for the ASUS VG248QE monitor, a very popular 144Hz gaming display that has been out for nearly a year. The upgrade takes about 30 minutes and requires only a Phillips screwdriver. There is no word on the upgrade module's price or release date just yet.