NVIDIA GeForce GTX Titan Video Card Preview

GPU Boost 2.0 Details

When we got our hands on our first NVIDIA Kepler card, the GeForce GTX 680, we got our first look at NVIDIA GPU Boost. Now that NVIDIA is launching the Titan, it is also showcasing the next generation of the technology, GPU Boost 2.0. There are a number of similarities between GPU Boost 1.0 and the new GPU Boost 2.0, though if history tells us anything, a new version of software from NVIDIA is likely to work better than the one before it.

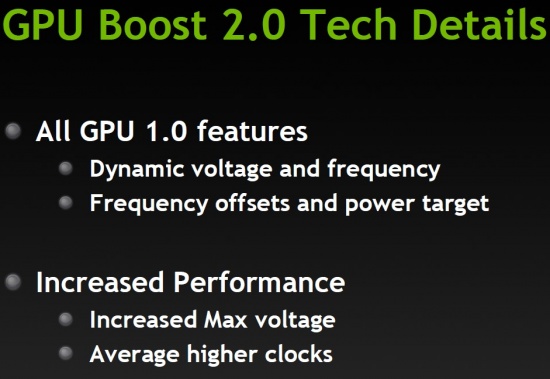

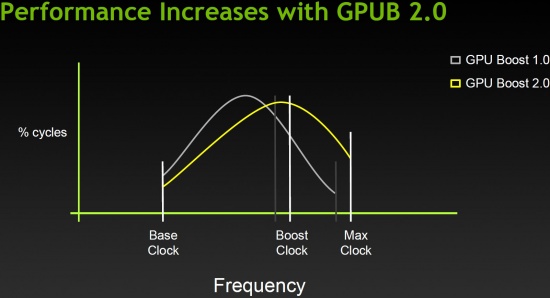

The overall concept behind GPU Boost 2.0 is in line with the first version: take the extra headroom available under the current load and use it to increase the core frequency. Both versions have dynamic voltage and frequency ranges that depend on the load, at least to a point. With the original GPU Boost 1.0 on graphics cards like the NVIDIA GeForce GTX 680, the boost clock was tied to the power range of the core. On the Titan with GPU Boost 2.0, on the other hand, the boost clock is tied to the temperature of the core. The cooler the GK110 core stays, the higher it can boost.

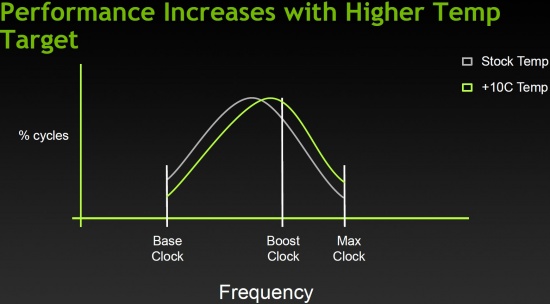

Above we can see the difference between GPU Boost 1.0 and the latest and greatest GPU Boost 2.0. Because GPU Boost 1.0 hits its thermal limits, its boost clock frequency tops out before the boost clock in GPU Boost 2.0 does.

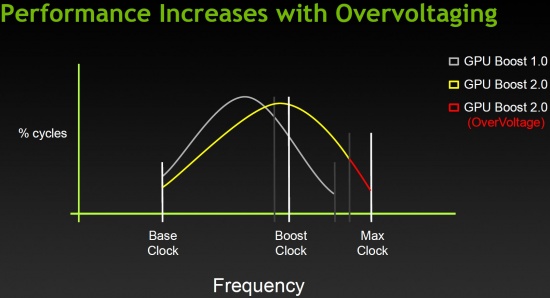

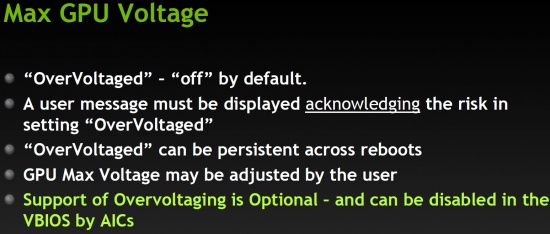

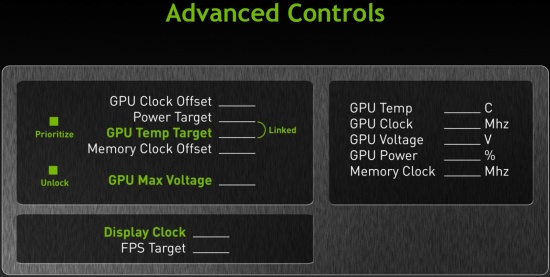

If we enable the OverVoltage option, we can see that the maximum clock speed of the Titan GPU will increase even further.

While the Titan GPU can be ‘OverVoltaged’, the option is set to Off by default. It is meant for those who know the risks involved in pushing the voltage limits of the silicon, and a warning message pops up and must be acknowledged before the feature can be used. It’s possible that not all vendors will support this feature, as it can be disabled in the VBIOS from the factory.

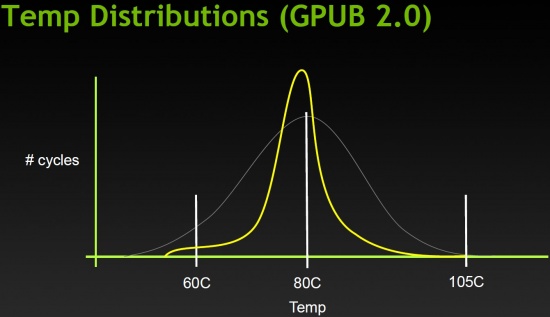

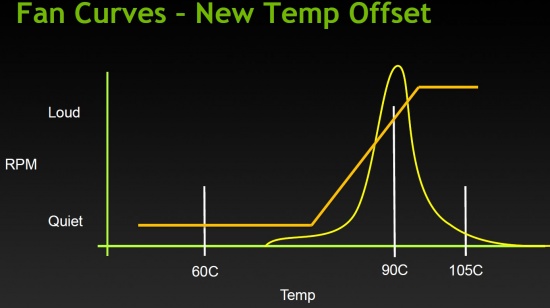

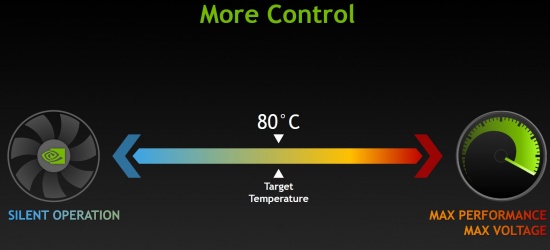

By default, GPU Boost 2.0 works to a temperature target of 80 degrees Celsius. Above you can see that the fan profile keeps the card right around 80 degrees. The tighter temperature range we see above (the white line is GPU Boost 1.0) keeps acoustics down by keeping the fan speed consistent.

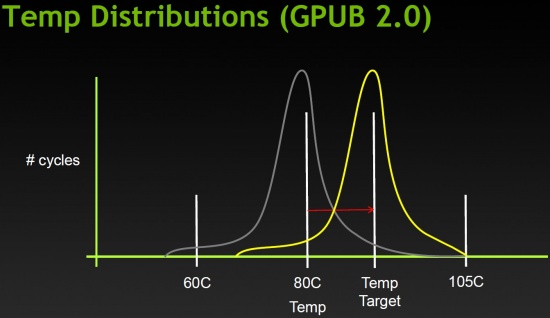

The target temperature can be adjusted, which allows GPU Boost 2.0 to push the Titan to even higher frequencies until it hits the new temperature target.

With a higher target temp, the boost clock maximum also increases.
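To make the idea of a temperature target concrete, here is a minimal sketch of a boost loop that raises the clock while the core is under target and backs off when it goes over. This is not NVIDIA's actual algorithm; the clock range, the step size, and the `toy_temp_c` thermal model are all made-up values for illustration only.

```python
def next_clock_mhz(clock_mhz, temp_c, target_c,
                   base_mhz=800, max_mhz=1000, step_mhz=13):
    """One step of a toy temperature-target boost loop.

    All clock values are illustrative assumptions, not Titan specifications.
    """
    if temp_c < target_c:
        # Thermal headroom left: step the clock up, but not past the ceiling.
        return min(clock_mhz + step_mhz, max_mhz)
    if temp_c > target_c:
        # Over the target: step the clock down, but not below the base clock.
        return max(clock_mhz - step_mhz, base_mhz)
    return clock_mhz


def toy_temp_c(clock_mhz):
    """Hypothetical thermal model: temperature rises linearly with clock."""
    return 30.0 + 0.06 * clock_mhz


def settle_clock_mhz(target_c, steps=50, base_mhz=800):
    """Run the toy loop for a fixed number of steps and return the final clock."""
    clock = base_mhz
    for _ in range(steps):
        clock = next_clock_mhz(clock, toy_temp_c(clock), target_c,
                               base_mhz=base_mhz)
    return clock


if __name__ == "__main__":
    for target in (80, 85):
        print(f"target {target} C -> settles near {settle_clock_mhz(target)} MHz")
```

In this toy model the clock ends up hovering around whatever frequency keeps the core at the target, so moving the target from 80 to 85 degrees lets it settle at a higher clock, which is the behavior the slides describe.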

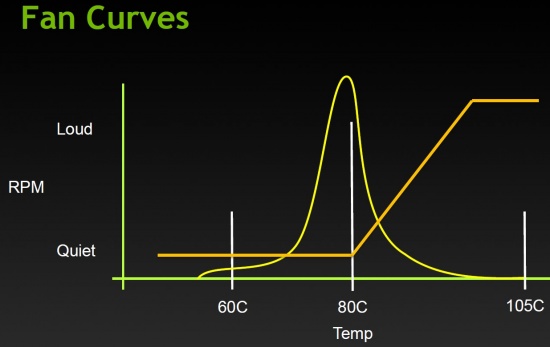

The tighter thermals of the Titan allow the fan to run at a lower speed for longer. This reduces the overall system noise and gives a better, quieter gaming experience.

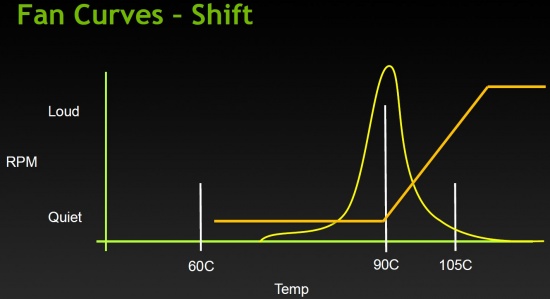

Sliding the target temperature up further, we can see where it would fall on the original fan profile.

Once we adjust the target temperature, the fan curve will shift to match it.

GPU Boost 2.0 gives us greater control over the Titan GPU. We can set it for silent operation, maximum performance, or somewhere in between the two!

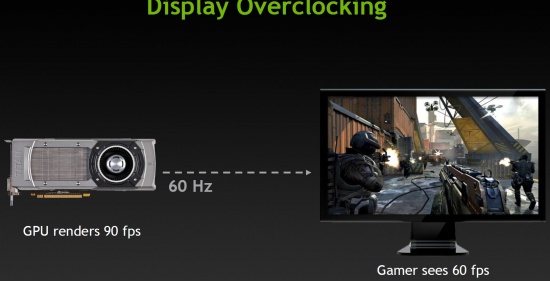

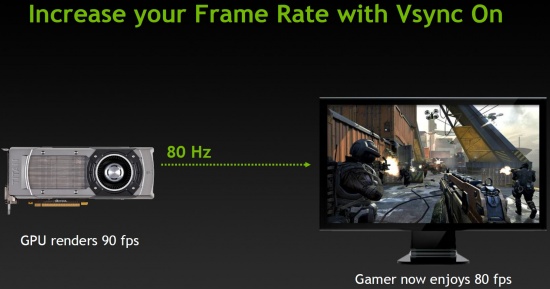

Regardless of how many frames per second your GPU of choice can crank out, you are ultimately limited by the refresh rate of your monitor. In the example above, the GPU is rendering 90 frames per second, but we only see 60 frames per second when VSync is enabled. Without VSync enabled we would see tearing. What if we could increase the refresh rate of our monitors?

Now we may be able to do just that. GPU Boost 2.0 has a feature that allows ‘display overclocking’! Not all monitors will support this feature, but if yours does, you can raise the refresh rate and with it the frame rate you see with VSync enabled.
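The arithmetic behind the VSync example is simple enough to sketch. The snippet below is a simplified model that only covers the case discussed here, where the GPU renders faster than the display refreshes. The `displayed_fps` helper and the 80 Hz overclocked refresh rate are hypothetical and are not figures from NVIDIA.

```python
def displayed_fps(rendered_fps, refresh_hz, vsync=True):
    """Frames per second that reach the screen in a simplified model.

    With VSync on, the display's refresh rate caps what we see. With VSync
    off, every rendered frame is sent out, at the cost of tearing.
    """
    if vsync:
        return min(rendered_fps, refresh_hz)
    return rendered_fps


if __name__ == "__main__":
    # The article's example: 90 FPS rendered on a 60 Hz panel.
    print(displayed_fps(90, 60))
    # Same GPU output on a panel overclocked to a hypothetical 80 Hz.
    print(displayed_fps(90, 80))
```

The GPU's output does not change between the two calls; only the cap moves, which is why a higher refresh rate lets more of those rendered frames show up on screen without tearing.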

The NVIDIA GeForce GTX Titan gives you more control over what your card is doing than any other video card ever released!

Come back and visit Legit Reviews for our performance review on the NVIDIA GeForce GTX Titan on Thursday!
