Looking At DirectX 12 Performance – 3DMark API Overhead Feature Test

Gamers are more than excited about DirectX 12 coming to market later this year, and after seeing what other low-level APIs like AMD Mantle could do to lower API overhead, we certainly understand why. AMD Mantle was the first API to greatly reduce the CPU overhead caused by the command lists and buffers that DirectX needs for a GPU while rendering a game title. AMD was able to come up with a more efficient API that made better use of CPU time by having game developers write code that used the hardware in the system more directly, without relying on the API itself. AMD Mantle was pretty revolutionary a couple of years back, and while it might be transitioning into the Khronos Vulkan API and eventually disappear, no one can deny that it impacted the gaming market positively.

Once Microsoft releases Windows 10 later this year, we will begin seeing DirectX 12 game titles slowly coming out, and DX12 performance might be a big factor in picking your next discrete desktop graphics card! Futuremark recently released a DirectX 12 feature test called the 3DMark API Overhead Feature Test that allows enthusiasts and gamers to look at the draw call benefits of the new DX12 API. The 3DMark API Overhead Feature Test should show how future game titles that are developed ‘to the metal’ will perform. We expect that this will be beneficial to both console and PC gamers, as it will mean better overall performance and hopefully game ports that don’t suck.

The benchmark is synthetic, but it should show how many draw calls the system being tested can handle. The benchmark also supports other APIs, so we can finally use a single benchmark utility to look at the difference between DirectX 11 single-threaded, DirectX 11 multi-threaded, DirectX 12 and even Mantle! The 3DMark API Overhead test has a technical guide on how it works available online for those that would like to know more about the details. At a very high level, it is not a general-purpose GPU benchmark and should not be used to compare graphics cards from different vendors. The test is designed to make API overhead the performance bottleneck by issuing thousands to millions of draw calls from the CPU to the GPU, each of which draws an object in the scene. The API Overhead test draws as many individual buildings as possible while keeping the GPU load at a bare minimum by using simple shaders and no lighting effects, and it keeps adding buildings until the frame rate drops below 30 FPS. Once the frame rate drops below 30 frames per second, the number of draw calls per frame is kept constant and the average frame rate is measured over 3 seconds. This artificial scenario is unlikely to be found in games, but it should give us an idea of the performance gains that can be had from DX12.
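The ramp-then-measure idea described above can be sketched in a few lines of Python. This is only a toy model and not Futuremark's code: the function name `find_result`, the single `calls_per_second_limit` constant, and the simplified linear ramp are all assumptions made for illustration. We also assume the reported score is the draw calls per frame multiplied by the measured frame rate.

```python
def find_result(calls_per_second_limit, steps, fps_floor=30.0):
    """Walk through draw-call counts until the simulated frame rate drops below fps_floor.

    calls_per_second_limit is a hypothetical constant for this toy model:
    how many draw calls per second the CPU/API/driver path can submit.
    """
    for calls_per_frame in steps:
        # Toy model: frame time grows linearly with the number of draw calls.
        fps = calls_per_second_limit / calls_per_frame
        if fps < fps_floor:
            # The real test now holds this count constant and averages the
            # frame rate over 3 seconds; here we use the modelled value directly.
            draw_calls_per_second = calls_per_frame * fps
            return calls_per_frame, fps, draw_calls_per_second
    return None  # the frame rate never dropped below the floor


# Simplified linear ramp (an assumption, not the real step schedule).
steps = range(192, 400_000, 12)
result = find_result(2_790_000, steps)
print(result)
```

In this model the score simply recovers the submission limit we fed in, which is the point: once the GPU load is negligible, the frame rate is set almost entirely by how fast draw calls can be issued.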

3DMark API Overhead Feature Test Technical Details:

The test is designed to make API overhead the performance bottleneck. The test scene contains a large number of geometries. Each geometry is a unique, procedurally-generated, indexed mesh containing 112-127 triangles.

The geometries are drawn with a simple shader, without post processing. The draw call count is increased further by drawing a mirror image of the geometry to the sky and using a shadow map for directional light.

The scene is drawn to an internal render target before being scaled to the back buffer. There is no frustum or occlusion culling to ensure that the API draw call overhead is always greater than the application side overhead generated by the rendering engine.

Starting from a small number of draw calls per frame, the test increases the number of draw calls in steps every 20 frames, following the figures in the table below.

To reduce memory usage and loading time, the test is divided into two parts. The second part starts at 98304 draw calls per frame and runs only if the first part is completed at more than 30 frames per second.

| Draw calls per frame | Draw calls per frame increment per step | Accumulated duration in frames |
| --- | --- | --- |
| 192 to 384 | 12 | 320 |
| 384 to 768 | 24 | 640 |
| 768 to 1536 | 48 | 960 |
| 1536 to 3072 | 96 | 1280 |
| 3072 to 6144 | 192 | 1600 |
| 6144 to 12288 | 384 | 1920 |
| 12288 to 24576 | 768 | 2240 |
| 24576 to 49152 | 1536 | 2560 |
| 49152 to 98304 | 3072 | 2880 |
| 98304 to 196608 | 6144 | 3200 |
| 196608 to 393216 | 12288 | 3520 |
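The figures above follow a regular pattern, and a short Python sketch can regenerate them. The pattern is our reading of the numbers rather than something Futuremark spells out: each range doubles the draw call count, the increment doubles with it, and every step lasts the 20 frames mentioned earlier. The function name `schedule` and its parameters are made up for this example.

```python
FRAMES_PER_STEP = 20  # the test raises the draw call count every 20 frames


def schedule(start=192, first_increment=12, tiers=11):
    """Return (low, high, increment, accumulated_frames) for each range."""
    rows = []
    low, increment, frames = start, first_increment, 0
    for _ in range(tiers):
        high = low * 2                      # each range ends at double its start
        steps = (high - low) // increment   # works out to 16 steps per range
        frames += steps * FRAMES_PER_STEP
        rows.append((low, high, increment, frames))
        low, increment = high, increment * 2
    return rows


for low, high, increment, frames in schedule():
    print(f"{low:>6} to {high:>6}  step {increment:>5}  {frames:>4} frames")
```

Each range works out to 16 steps of 20 frames, or 320 frames, which is why the accumulated duration climbs by 320 in every row.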

Our test system was built around the following hardware:

- Intel Core i7-5960X Processor

- ASUS X99 Deluxe Motherboard

- 16GB Crucial Ballistix Elite DDR4 2666MHz Memory

- Corsair Hydro Series H105 CPU Water Cooler

- Gigabyte GeForce GTX 980 & Sapphire Radeon R9 290X

- Corsair Neutron XT 240GB SSD

- Corsair AXi 860 Power Supply

- Windows 10 Tech Preview Build 10041

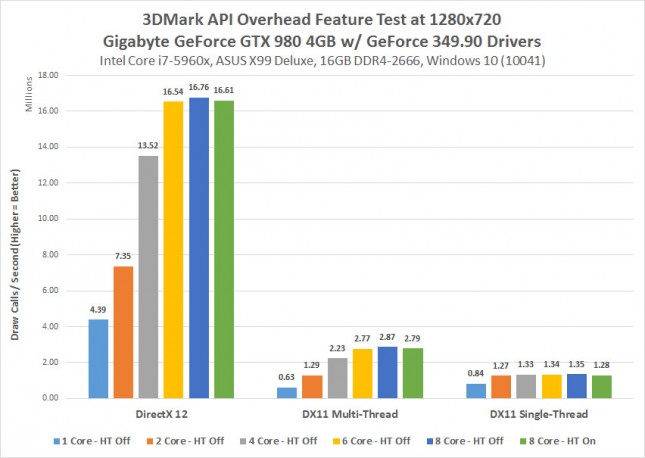

The first video card we looked at was the Gigabyte GeForce GTX 980 with GeForce 349.90 video card drivers. Our testing showed that our Haswell-E test system, with its 8-core processor at default settings, performed as we expected it to. We saw 1.28 million draw calls per second with DirectX 11 single-threading, and that jumped up to 2.79 million draw calls per second with DirectX 11 multi-threading. On the DirectX 12 test we found 16.61M draw calls per second, which is insane. This is nearly a 6x improvement in draw calls over DirectX 11 multi-threading with just the API switch! DirectX 12 is going to offer substantial performance improvements in areas where a system was being bottlenecked by the API overhead.
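For anyone who wants to check the ratios between those three GeForce GTX 980 results, here is a quick Python sketch. The numbers are the ones quoted above; the dictionary and the `speedup` helper are just names picked for this example.

```python
# Draw calls per second, in millions, from the GeForce GTX 980 run above.
results = {
    "DX11 single-threaded": 1.28,
    "DX11 multi-threaded": 2.79,
    "DX12": 16.61,
}


def speedup(new, old):
    """How many times faster the new result is than the old one."""
    return new / old


dx12 = results["DX12"]
for api in ("DX11 single-threaded", "DX11 multi-threaded"):
    print(f"DX12 vs {api}: {speedup(dx12, results[api]):.2f}x")
```

The multi-threaded comparison is where the "nearly 6x" figure comes from. Measured against DirectX 11 single-threaded, the gap is closer to 13x.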

We also looked at system performance with 1, 2, 4, 6 and 8 cores enabled as well as having Intel Hyper-Threading enabled and disabled. We discovered a few things that are important to note. For starters, performance was better with Hyper-Threading disabled, so we left it off when we started to disable cores. We also found that DX11 multi-threaded performance and DX12 API performance were best on a system with 6-8 CPU cores! DX11 single-threaded performance was basically the same when running 2-8 CPU cores. We saw an 18% performance drop in DX12 draw call performance when moving down to a quad-core processor, and from there we found another 46% performance drop when we moved down to a dual-core processor. If you are building a brand new gaming system and want the best performance possible, it looks like you now have a reason to spend more for that 6-core or 8-core processor, although we’d like to have some real DX12 game titles to try this out on first.
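Since the two DX12 drops are stacked on top of each other (the 46% is measured from the quad-core result, not from the 6-8 core result), they multiply rather than add. A small Python sketch makes that explicit; the helper name `remaining_after` is ours, and the percentages are the rounded ones quoted above.

```python
def remaining_after(drops):
    """Fraction of the original performance left after successive relative drops."""
    remaining = 1.0
    for drop in drops:
        remaining *= 1.0 - drop  # each drop applies to what is left, not to the original
    return remaining


# 18% drop going to quad-core, then a further 46% drop going to dual-core.
dual_core_share = remaining_after([0.18, 0.46])
print(f"Dual-core keeps {dual_core_share:.1%} of the 6-8 core DX12 result")
print(f"Total drop: {1.0 - dual_core_share:.1%}")
```

In other words, the dual-core configuration ends up with roughly 44% of the DX12 draw call throughput of the 6-8 core setup, a total drop of about 56%.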

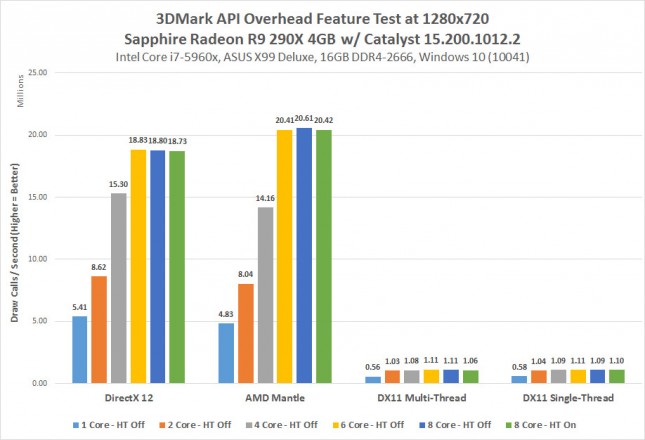

When moving over to the Sapphire Radeon R9 290X video card with AMD CATALYST 15.200.1012.2 drivers, we are finally able to see Mantle versus DirectX 12! We got the highest number of draw calls per second with AMD Mantle! We hit 20.61M draw calls per second with the Radeon R9 290X and the AMD Mantle API with the Intel Core i7-5960X processor and HT disabled! This is 3.85M more draw calls per second than the GeForce GTX 980 could reach, but remember Futuremark said not to compare GPUs with this test. The Radeon R9 290X showed no significant performance improvement when going from the DX11 single-threaded test to the DX11 multi-threaded test, which really shocked us. Why was there no performance scaling to be had on DX11? We aren’t sure if the Windows 10 beta driver has support for this or not, but that is concerning.

The Radeon R9 290X performed best with 6 and 8-core processors, which is similar to what we saw with the GeForce GTX 980. We noticed that Mantle was able to perform better than DX12 on the 6 and 8-core tests, but DX12 was able to deliver more draw calls per second on the 1, 2 and 4-core tests. The performance difference between a quad-core and a six-core processor was rather significant, as we saw a 23% performance gain on DX12 and a 44% increase on Mantle by having a 6-core processor.

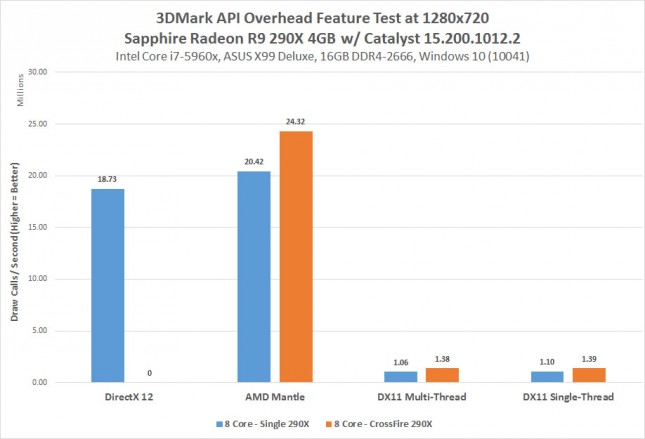

Update 3/30/2015 5pm CT: We had some readers ask about CrossFire performance, so we gave it a shot on two Radeon R9 290X 4GB video cards and found that DX12 was not supported in this early driver build for CrossFire. Both the Mantle and DX11 APIs saw significant performance improvements in 2-way CrossFire mode though. Our Radeon R9 290X CrossFire setup hit 24.32 Million Draw Calls Per Second with stock clock speeds! This is an increase of 3.9M calls per second, which works out to being a 19% improvement by adding a second card. Not the greatest gains we have ever seen from a look at CrossFire scaling, but there is some scaling to be had by adding a second card into the system.

Final Thoughts:

Our goal here was to take a quick look at DirectX 12 and how it is looking on a couple of high-end cards from AMD and NVIDIA. We discovered that DirectX 12 is looking great according to the Futuremark 3DMark API Overhead Feature Test that was just released last week. There are major improvements possible in API efficiency, and it is clear that both DX12 and Mantle do a great job of relieving the bottleneck that was holding back performance for all these years. We won’t be seeing game performance increase by 6x, but there will most certainly be gains now that the main processor bottleneck is gone. With Microsoft DX12 and AMD Mantle it appears that the draw call performance issue is gone and that PC game developers are now free to create games that have many millions of draw calls a second without having to worry about any CPU bottleneck issues. It will be interesting to see how the Khronos Vulkan API performs down the road as well.

We also found out that Microsoft is controlling the graphics drivers for the Windows 10 Tech Preview and the only way to get the latest GPU drivers is to go through Windows Update. This is the first time ever that we have needed to rely on Microsoft for drivers, and the first time AMD and NVIDIA have told us that they couldn’t give us GPU drivers. Microsoft removed the GeForce 349.90 drivers during our testing period, so we found ourselves in the odd situation where Microsoft pulled the drivers and NVIDIA couldn’t send us anything. Is that going to be the new normal? No more downloadable driver updates direct from NVIDIA and AMD? We were told that this will not be happening, but you never know!