Intel Pentium G3220 Processor Review

BioShock Infinite

BioShock Infinite is a first-person shooter developed by Irrational Games and published by 2K Games. It is the third installment in the BioShock series, and though it is not part of the storyline of the previous BioShock games, it features similar gameplay concepts and themes. BioShock Infinite uses a modified Unreal Engine 3 and was released worldwide on March 26, 2013.

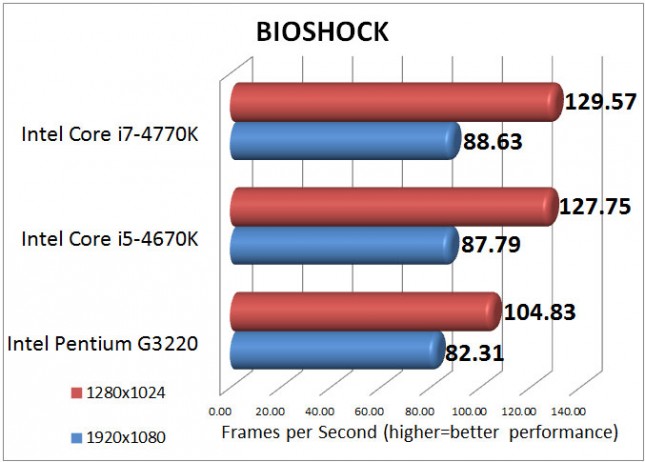

We tested BioShock Infinite with the Ultra game settings.

Benchmark Results: The gaming performance of the Intel Pentium G3220 is certainly better than I expected. At 1920×1080 the Pentium G3220 was slower than the other processors, but not by nearly as much as I had anticipated. The Pentium G3220 averaged 82.31 frames per second, only 6.32 fps behind the Intel Core i7-4770K average of 88.63 frames per second. Once we lowered the resolution to 1280×1024, the Intel Pentium G3220 lost some ground: it averaged 104.83 frames per second compared to the 129.57 frames per second that the 4770K averaged, a gap of 24.74 fps.
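To put those gaps in relative terms, here is a quick sketch of the arithmetic in Python. It uses only the four averages quoted above; the `deficit` helper and the `results` layout are my own illustration, not part of our test suite.

```python
# Average frame rates quoted in the benchmark results (frames per second).
results = {
    "1920x1080": {"Pentium G3220": 82.31, "Core i7-4770K": 88.63},
    "1280x1024": {"Pentium G3220": 104.83, "Core i7-4770K": 129.57},
}


def deficit(slower, faster):
    """Return (fps gap, gap as a percentage of the faster result)."""
    gap = faster - slower
    return gap, 100.0 * gap / faster


for resolution, fps in results.items():
    gap, pct = deficit(fps["Pentium G3220"], fps["Core i7-4770K"])
    print(f"{resolution}: {gap:.2f} fps behind ({pct:.1f}%)")
```

By this measure the Pentium G3220 trails the 4770K by about 7% at 1920×1080 and about 19% at 1280×1024, which is consistent with the lower resolution shifting more of the load onto the processor.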