The DFI LANParty NF4 SLI-DR

Graphics Tests: SLI or No SLI?

For graphics testing I used the latest NVidia drivers available on the NVidia website, ForceWare 66.93. Newer beta drivers are available, but I felt the latest certified drivers were the better choice because they represent what most people are currently using.

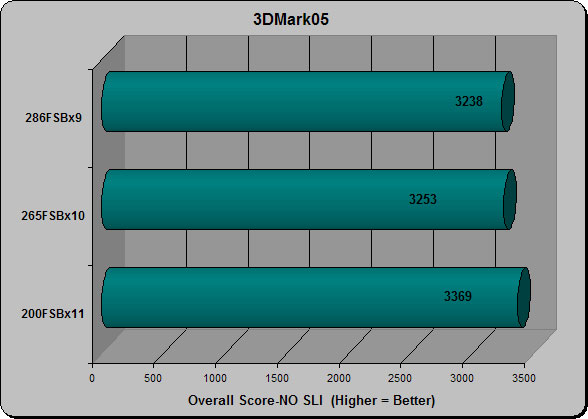

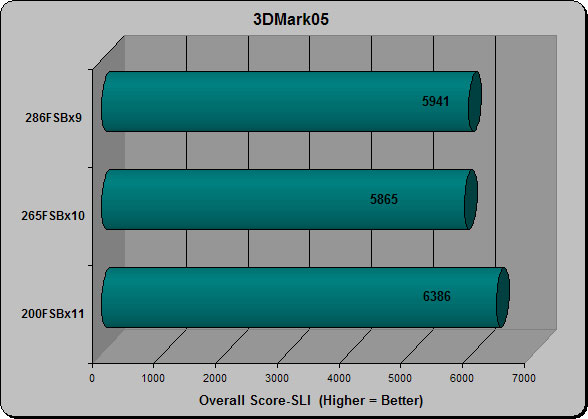

3DMark05

3DMark05 is a premium benchmark for evaluating the latest generation of gaming hardware. It is the first benchmark to require DirectX 9.0-compliant hardware with support for Pixel Shader 2.0 or higher. Resolution was set to 1024×768.

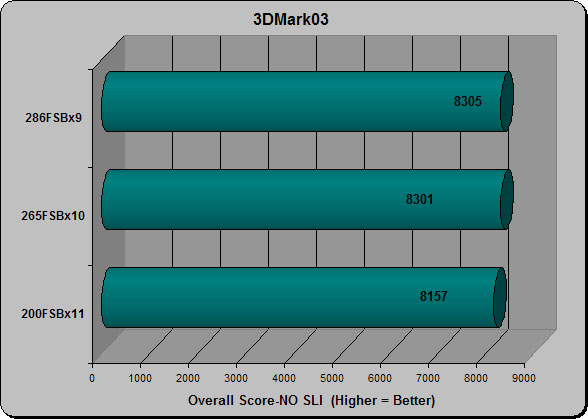

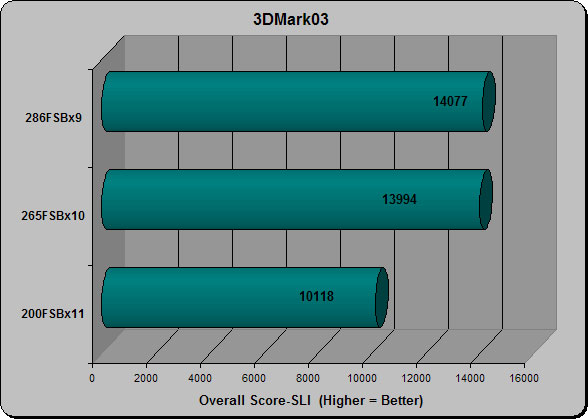

3DMark03

3DMark03 is a collection of four 3D game-based tests. Each 3DMark03 game test is a real-time rendering of a 3D scenario. It is important to note that these renderings are not merely animations or a set of recorded events; they are designed to work the way 3D games do. As in 3D games, all computations are performed in real time. This is a critical part of Futuremark's philosophy of 3D graphics benchmarking.

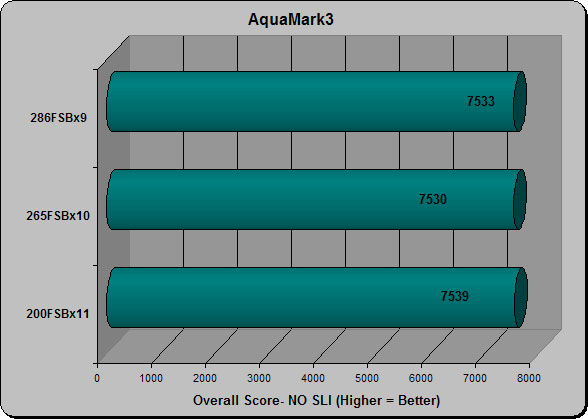

AquaMark3

AquaMark3 is a powerful tool for obtaining reliable information about the gaming performance of a computer system. Again, resolution was set to 1024×768.

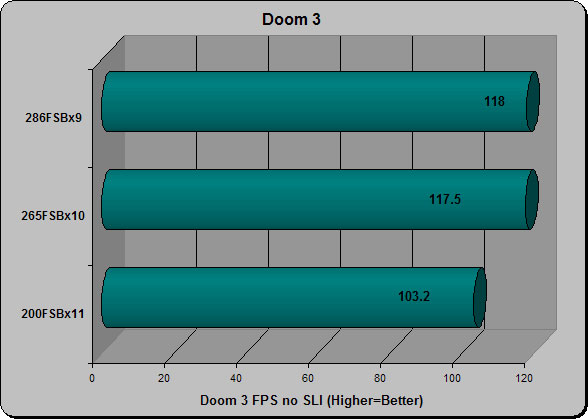

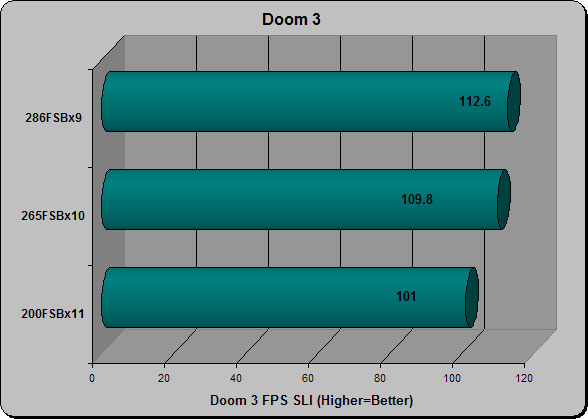

Doom 3

Doom 3 is one of the most system-taxing games available, and its popularity also makes it a great choice for system benchmarking. I like to use Time Demo 1 at 1024×768 with detail set to High.

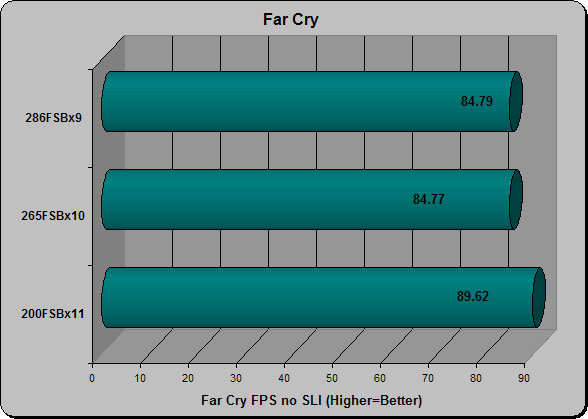

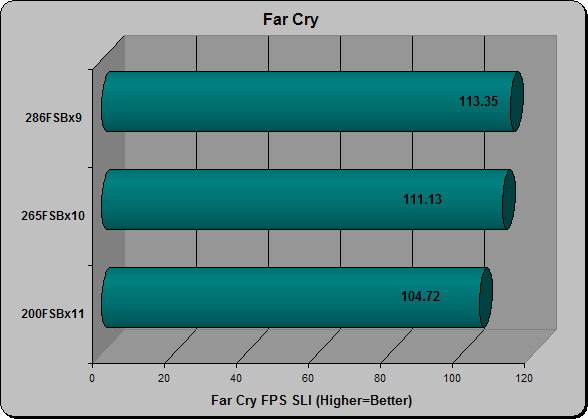

Far Cry

Far Cry is another hugely popular FPS title that seriously taxes your system's graphics. HardwareOC developed a specialized benchmarking utility for it that automatically runs the test twice and averages the scores. For this test I used the "Volcano" benchmark at 1024×768 with detail levels set to High.

When I first fired up this board in SLI mode, I ran into a serious problem. I was testing with a matched pair of XFX 6600GTs, yet no matter what I tried, the board recognized both cards but wouldn't enable SLI. After scratching my head for about 15 minutes, I realized that although the cards were identical, and therefore should have been running at the same clock speeds, they weren't. One card's default clocks were 300MHz/1000MHz, while the other's were 300MHz/1200MHz, and that mismatch kept the pair from running in SLI. I had to run each card individually and manually set both to 300MHz/1000MHz before SLI would work. After doing that once, I had no problems at all and could switch freely between SLI and single-card configurations.

As the results show, SLI makes a noticeable difference in applications that support it. Something less obvious I noticed is that in SLI mode, applications and benchmarks that report a CPU score alongside their graphics results produced lower CPU scores across the board. My interpretation is that while SLI does provide a performance boost to the system's graphics, it also places more load on the CPU by giving it more data to process.
