AMD Radeon R9 Nano Versus NVIDIA GeForce GTX 980

Power Consumption

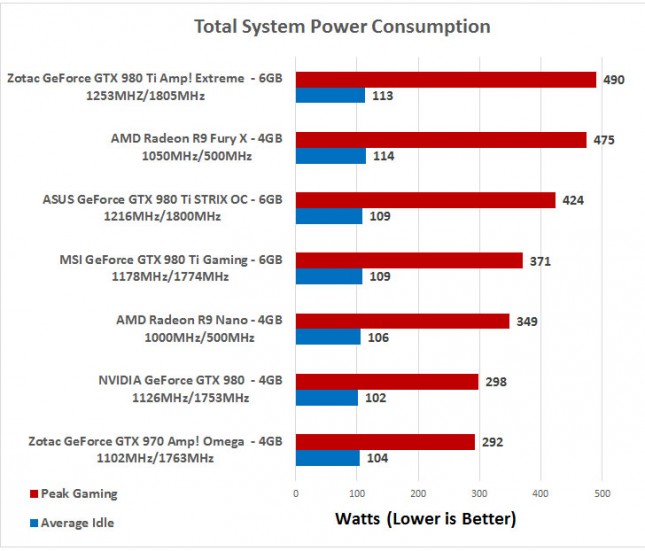

To test power consumption, we plugged our test system into a Kill-A-Watt power meter, so all figures are for the whole system rather than the graphics card alone. For idle numbers, we let the system sit on the desktop for 15 minutes and then took the reading. For load numbers, we ran Battlefield 4 at 3840×2160. We recorded the average idle reading and the peak gaming reading shown on the power meter.
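If you want to repeat this kind of measurement at home, the two figures can be worked out from readings noted off the meter. The snippet below is only a minimal sketch: the function name `summarize_power` and the wattage values are hypothetical placeholders, not the numbers from our test bench.

```python
from statistics import mean


def summarize_power(idle_watts, load_watts):
    """Return (average idle draw, peak load draw) in watts.

    Both arguments are lists of whole-system readings noted
    from a wall power meter.
    """
    if not idle_watts or not load_watts:
        raise ValueError("need at least one reading for each state")
    # Idle is reported as an average, load as the highest value seen.
    return mean(idle_watts), max(load_watts)


# Placeholder readings for illustration only (not review data).
idle_avg, load_peak = summarize_power(
    [100.0, 102.0, 101.0],   # desktop idle readings, in watts
    [300.0, 325.0, 310.0],   # readings while gaming, in watts
)
print(f"idle average: {idle_avg:.1f} W, load peak: {load_peak:.1f} W")
```

Subtracting one card's figures from the other's gives the kind of idle and gaming deltas quoted in the results.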

Power Consumption Results: The system with the AMD Radeon R9 Nano used 4 Watts more power at idle and roughly 50 Watts more when gaming, so NVIDIA wins this round if power consumption is a concern for you.

Let’s wrap this review up!