Adaptec RAID 3805 8-port SATA and SAS Controller Review

Sequential Reads

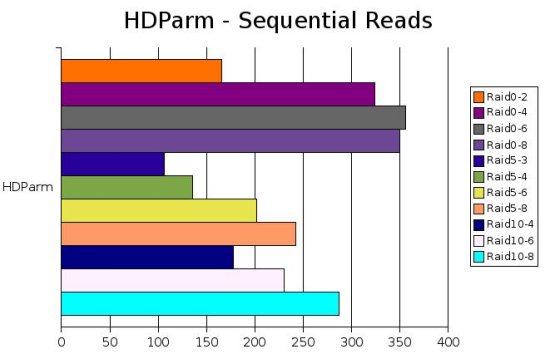

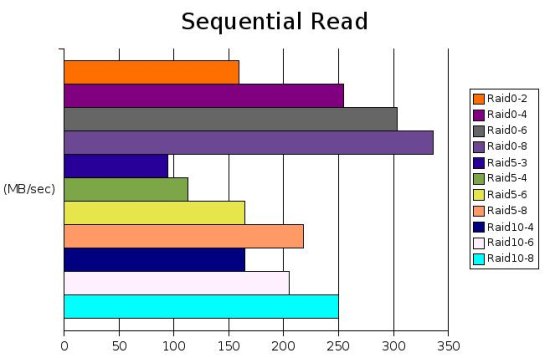

Hdparm is a simple hard drive configuration tool in Linux. In the old days it was how Linux users would activate DMA and manually shut down drives. It also has a couple of nice tests that show basic sequential read performance, both on the drive itself and on its cache. The cache speed is constant, so it has been omitted.
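For anyone who wants to collect the same kind of number, here is a minimal Python sketch built around `hdparm -t`, the buffered disk read test. The helper names `parse_buffered_read` and `measure` are made up for illustration, the sample line is invented rather than taken from this review, and the parser assumes the result is reported in MB/sec.

```python
import re
import subprocess

# hdparm -t prints a line such as:
#  Timing buffered disk reads: 200 MB in  3.01 seconds =  66.45 MB/sec
# Only the MB/sec form is handled here; other units are an untested case.
BUFFERED_RE = re.compile(r"Timing buffered disk reads:.*=\s*([\d.]+)\s*MB/sec")


def parse_buffered_read(output):
    """Return the buffered read rate in MB/sec, or None if no line matches."""
    match = BUFFERED_RE.search(output)
    return float(match.group(1)) if match else None


def measure(device):
    """Run `hdparm -t` on a device (needs root) and return MB/sec."""
    result = subprocess.run(
        ["hdparm", "-t", device],
        capture_output=True, text=True, check=True,
    )
    return parse_buffered_read(result.stdout)


if __name__ == "__main__":
    # Invented sample line for illustration, not a measurement from this review.
    sample = " Timing buffered disk reads: 200 MB in  3.01 seconds =  66.45 MB/sec"
    print(parse_buffered_read(sample))
```

Running `measure("/dev/sda")` a few times and averaging would smooth out run-to-run noise; the device path is only an example.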

What sticks out here is the raid0 performance. After we got past 4 drives and moved to 6, there was only a fairly small difference in read speed. Moving to 8 drives (raid0-8) actually decreased our read performance, most likely due to limits of the controller or the 4-lane bus. Getting 350MB/sec on a read is just over 5x that of a single Raptor, which tops out at around 67MB/s.
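The comparison with a single drive is simple arithmetic, sketched below in Python with the two figures quoted above (350MB/sec for the array, 67MB/s for one Raptor). The function names are illustrative, and the drive count passed to `scaling_efficiency` is an assumption, since the text does not tie the 350MB/sec figure to a specific number of drives.

```python
def speedup(array_mb_s, single_mb_s):
    """How many times faster the array reads than one drive."""
    return array_mb_s / single_mb_s


def scaling_efficiency(array_mb_s, single_mb_s, drives):
    """Fraction of ideal linear scaling reached (1.0 = perfect)."""
    return speedup(array_mb_s, single_mb_s) / drives


if __name__ == "__main__":
    print(round(speedup(350, 67), 2))
    # The drive count of 6 is an assumption made for this example only.
    print(round(scaling_efficiency(350, 67, 6), 2))
```

An efficiency well below 1.0 at higher drive counts is what you would expect if the controller or bus, rather than the drives, is the bottleneck.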

The raid5 performance is fairly consistent as we add more drives. 4 drives yields about 135MB/sec, which is pretty reasonable for most setups. To match the performance of a simple raid0 array, however, you must jump to 5 or 6 drives.

Raid10 performance was a little surprising. Having 4 drives in raid10 is slightly faster than just 2 in raid0. This is likely just some experimental error. The raid10-8 configuration, however, does lag significantly behind a straight raid0-4 setup. Apparently managing all the mirrors swamped the card a bit. The usable space provided by raid0-4 and raid10-8 is identical. How important is your data?
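The capacity claim follows from the standard definitions of the RAID levels used in this review. The Python sketch below counts how many drives' worth of usable space each layout gives; `usable_drives` is an illustrative helper, and the minimum drive counts it enforces are the textbook ones, not something checked against this controller.

```python
def usable_drives(level, drives):
    """Number of drives' worth of usable capacity for a RAID layout."""
    if level == "raid0":
        # Pure striping: every drive holds unique data.
        return drives
    if level == "raid5":
        if drives < 3:
            raise ValueError("raid5 needs at least 3 drives")
        # One drive's worth of space goes to parity.
        return drives - 1
    if level == "raid10":
        if drives < 4 or drives % 2:
            raise ValueError("raid10 needs an even count of at least 4 drives")
        # Every drive is mirrored, so half the space is redundancy.
        return drives // 2
    raise ValueError("unsupported level: " + level)


if __name__ == "__main__":
    print(usable_drives("raid0", 4), usable_drives("raid10", 8))
```

Both layouts come out to four drives of usable space, so raid10-8 spends four extra drives purely on redundancy.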

Firing up Bonnie++ shows results similar to the hdparm test. The same slowdown is observed in the raid0-8 configuration. My guess is the controller has reached its processing limit in terms of bandwidth and drive count.
